Top Builders

Explore the top contributors with the most app submissions in our community.

Anthropic

Anthropic’s research, including its Constitutional AI training approach, focuses on developing AI systems that are safe by design and aligned with human values. By prioritizing safety, we can create capable and corrigible AI systems that are safe for humans to use.

| General | |
|---|---|
| Company | Anthropic |
| Founded | 2021 |
| Discord | https://discord.gg/lablab-ai-877056448956346408 |

Anthropic Claude

Claude is your friendly and versatile AI language model that can assist you as a company representative, research assistant, creative partner, or task automator.

Claude is Safe, Clever, and Yours. Built with safety at its core and with industry-leading security practices, Claude can be customized to handle complex multi-step instructions and help you complete your tasks.

You can easily use Claude in your app. The necessary APIs, boilerplates, tutorials, and more are available on our Claude tech page.

Claude Code

Claude Code is a command-line tool from Anthropic for agentic coding. It enables Claude to refactor, debug, and manage code directly in the terminal. You can find more information on our Claude Code tech page.

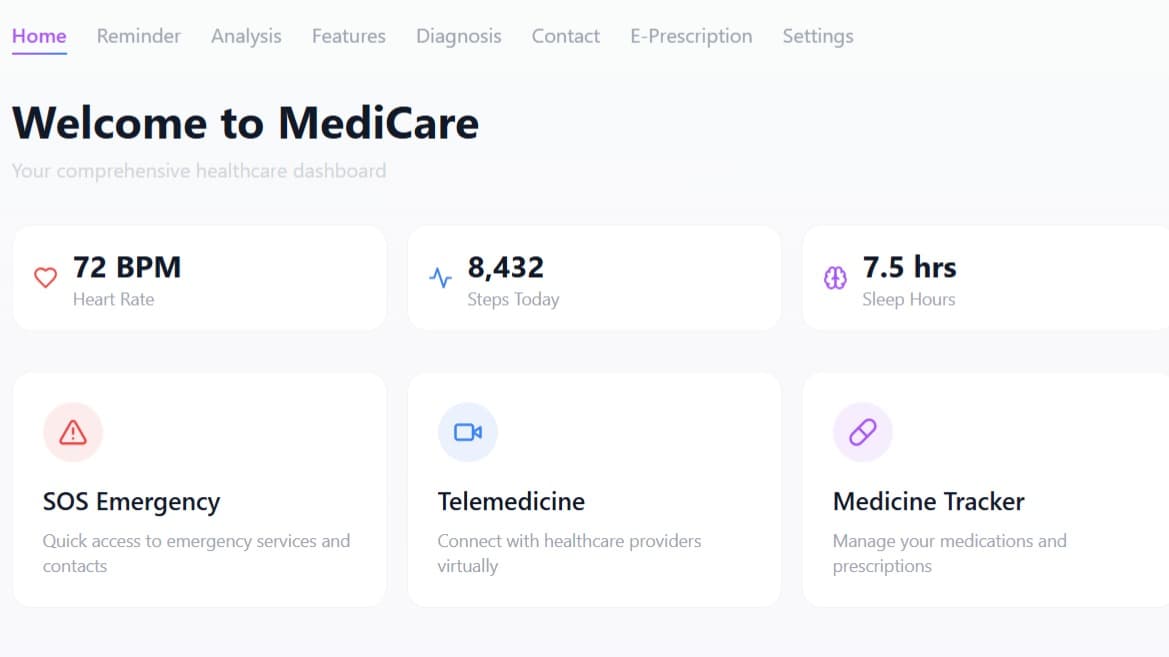

Anthropic AI Technologies Hackathon projects

Discover innovative solutions crafted with Anthropic AI Technologies, developed by our community members during our engaging hackathons.