GPT4All

GPT4All is an open-source ecosystem of large language models that run locally, on the edge, on consumer-grade CPUs. It provides a customizable AI assistant for tasks such as answering questions, writing content, understanding documents, and generating code.

GPT4All is supported and maintained by Nomic AI, which aims to make it easier for individuals and enterprises to train and deploy their own large language models on the edge.

| General | |
|---|---|
| Release date | 2023 |
| Author | Nomic AI |
| Type | Natural Language Processing |

Start building with GPT4All

To start building with GPT4All, visit the GPT4All website and follow the installation instructions for your operating system.

GPT4All Libraries

A curated list of libraries and resources to help you build projects with GPT4All.

- GPT4All Website

- GPT4All Documentation

- Python Bindings

- TypeScript Bindings

- GoLang Bindings

- C# Bindings
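
As a rough illustration of the Python bindings listed above, here is a minimal sketch of asking a locally running model a question. It assumes the `gpt4all` package is installed (`pip install gpt4all`). The model file name is only an example and may need to be swapped for one from the current model list, and `build_prompt` and `ask_local_model` are hypothetical helper names introduced here, not part of the library.

```python
def build_prompt(question: str, context: str = "") -> str:
    """Combine optional document context and a question into one prompt string."""
    question = question.strip()
    context = context.strip()
    if context:
        return f"Context:\n{context}\n\nQuestion: {question}"
    return f"Question: {question}"


def ask_local_model(
    prompt: str,
    model_name: str = "orca-mini-3b-gguf2-q4_0.gguf",  # assumed example model file
    max_tokens: int = 200,
) -> str:
    """Send a prompt to a model running locally through the gpt4all bindings."""
    # Imported lazily so build_prompt stays usable without the package installed.
    from gpt4all import GPT4All

    # On first use the bindings download the model file if it is not present.
    model = GPT4All(model_name)

    # chat_session() keeps conversation state for the duration of the block.
    with model.chat_session():
        return model.generate(prompt, max_tokens=max_tokens)
```

Calling `ask_local_model(build_prompt("What is GPT4All?"))` should return the model's reply as a string. The first call can take a while because the model file, typically a few gigabytes, has to be downloaded before inference runs on the local CPU.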

GPT4All Examples

For more information on GPT4All, including installation instructions, technical reports, and contribution guidelines, visit the GPT4All GitHub repository.

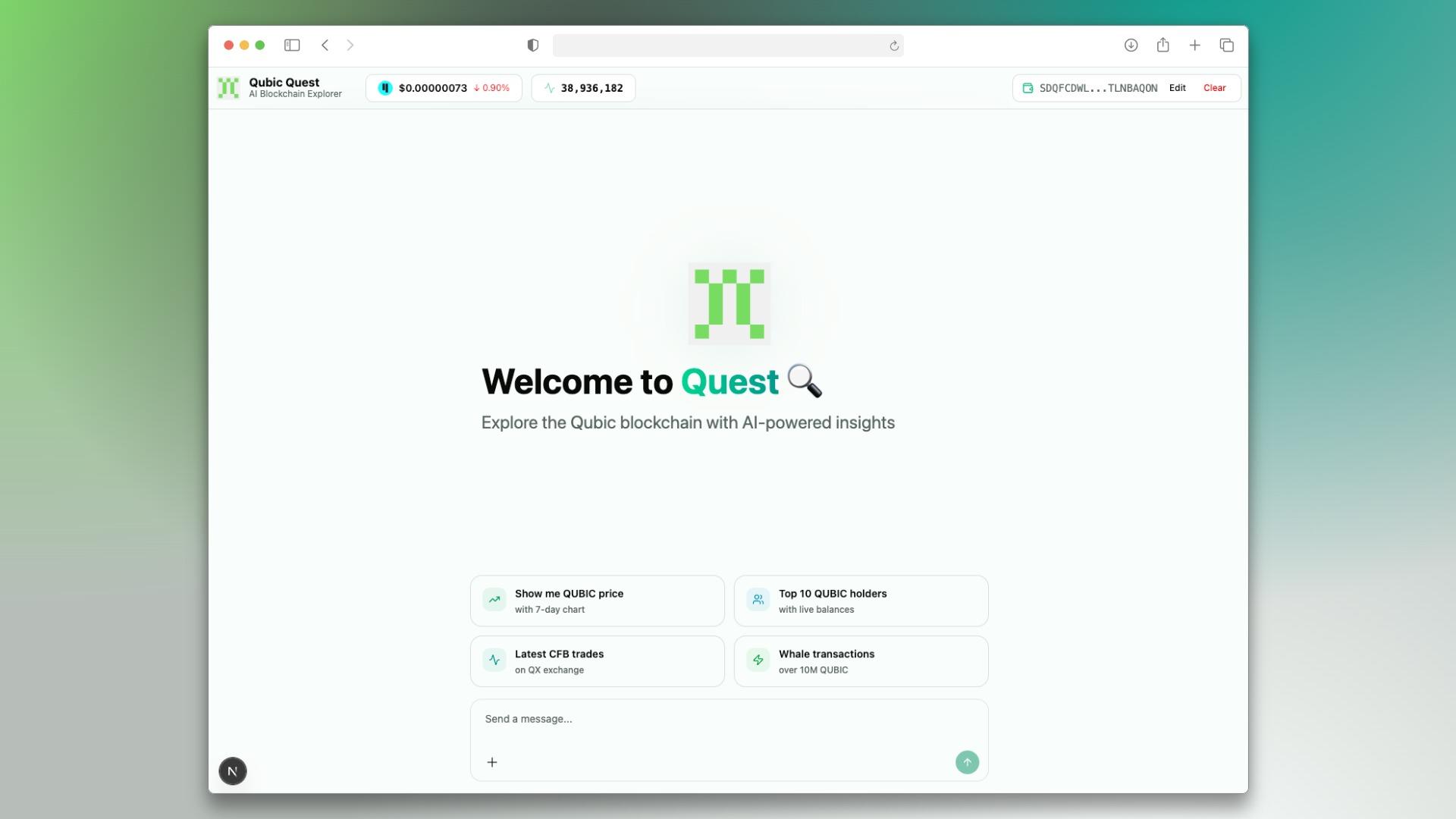

Nomic AI GPT4All Hackathon Projects

Discover solutions built with Nomic AI's GPT4All, developed by our community members during our hackathons.