AMD Developer Cloud

AMD Developer Cloud is a cloud-based GPU platform that gives developers on-demand access to AMD Instinct accelerators. It is designed for AI researchers, engineers, and builders who need high-memory GPU compute for training, fine-tuning, and inference without managing physical hardware. Members of the AMD AI Developer Program receive $100 in credits to start building immediately.

| General | |
|---|---|
| Author | AMD |
| Type | Cloud GPU Platform |
| Access | AMD AI Developer Program |
| Documentation | AMD Developer Cloud Overview |
| Hardware | AMD Instinct MI300X (192GB HBM3) |
| Pricing | $100 credits for Developer Program members; pay-as-you-go available |

Start building with AMD Developer Cloud

AMD Developer Cloud lets you access AMD Instinct MI300X GPUs through a simple cloud interface, so you can focus on building rather than configuring infrastructure. The MI300X features 192GB of HBM3 memory, which is enough to hold the weights of a 70B-parameter model at 16-bit precision (about 140GB) on a single GPU without model parallelism. Sign up for the AMD AI Developer Program to claim your credits and start running workloads today. Explore what the community has already built on AMD at AMD Use Cases and Applications.
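As a rough illustration of the memory claim above, the sketch below estimates how much memory a model's weights need at a given precision and compares it with the MI300X's 192GB. It is a back-of-the-envelope calculation, not an AMD tool: the helper names are hypothetical, GB is treated as 10^9 bytes, and the estimate covers weights only, ignoring the KV cache, activations, and framework overhead that real workloads also need.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "bf16": 2, "int8": 1}

# MI300X memory capacity as stated on this page (decimal GB assumed).
MI300X_HBM_GB = 192


def weight_memory_gb(params_billions, dtype="fp16"):
    """Approximate weight footprint in GB (hypothetical helper).

    1e9 parameters times N bytes each is N GB, so the factors of 1e9 cancel.
    """
    return params_billions * BYTES_PER_PARAM[dtype]


def fits_on_single_gpu(params_billions, dtype="fp16", capacity_gb=MI300X_HBM_GB):
    """True if the weights alone fit in the given capacity (hypothetical helper)."""
    return weight_memory_gb(params_billions, dtype) <= capacity_gb


if __name__ == "__main__":
    for dtype in ("fp32", "fp16", "int8"):
        need = weight_memory_gb(70, dtype)
        print(f"70B @ {dtype}: {need} GB, fits on one GPU: {fits_on_single_gpu(70, dtype)}")
```

Under these assumptions a 70B model needs about 140GB at 16-bit precision, which leaves roughly 52GB of headroom for everything else; at 32-bit precision the weights alone would exceed a single GPU.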

AMD Developer Cloud Tutorials

Getting Started

- AMD AI Developer Program: Sign up to access $100 in cloud credits and AMD Instinct GPU instances

- AMD Developer Cloud Overview: Platform documentation and getting started guide

- ROCm Documentation: Software stack reference for running AI workloads on AMD GPUs

- From Zero to AI Builder with AMD: Free training courses and hands-on labs with real GPU access

Key Use Cases

Fine-tuning LLMs: Use AMD Instinct MI300X instances to fine-tune open-source models such as Llama, DeepSeek, Mistral, and Qwen using PyTorch and ROCm. Hugging Face Optimum-AMD provides optimized training pipelines for AMD hardware.

Large model inference: The MI300X's 192GB HBM3 memory capacity supports running very large models on a single GPU, reducing the need for multi-GPU serving setups.

Benchmarking and prototyping: Test AI workloads on AMD hardware before moving to on-premises infrastructure. The pay-as-you-go pricing keeps experimentation costs low.

Hackathon development: During AMD-sponsored hackathons on lablab.ai, participants receive cloud credits to access AMD GPUs directly through AMD Developer Cloud. Explore upcoming AI hackathons to find events using AMD infrastructure.
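Before starting any of the PyTorch and ROCm workloads described above, it can help to confirm that PyTorch actually sees the AMD GPU. The following is a minimal sketch, assuming a ROCm build of PyTorch is installed on the instance. The function name `describe_accelerator` is hypothetical; the PyTorch calls it relies on (`torch.version.hip`, `torch.cuda.is_available`, `torch.cuda.get_device_name`) are the standard ones, since ROCm builds expose AMD GPUs through the `torch.cuda` namespace.

```python
import importlib.util


def describe_accelerator():
    """Report whether PyTorch is installed and whether it sees a ROCm GPU."""
    info = {
        "torch_installed": False,
        "rocm_build": False,
        "gpu_available": False,
        "device_name": None,
    }
    # Return early instead of raising if PyTorch is not installed.
    if importlib.util.find_spec("torch") is None:
        return info

    import torch

    info["torch_installed"] = True
    # torch.version.hip is a version string on ROCm builds and None otherwise.
    info["rocm_build"] = getattr(torch.version, "hip", None) is not None
    # On ROCm builds, AMD GPUs are reported through the torch.cuda API.
    info["gpu_available"] = bool(torch.cuda.is_available())
    if info["gpu_available"]:
        info["device_name"] = torch.cuda.get_device_name(0)
    return info


if __name__ == "__main__":
    for key, value in describe_accelerator().items():
        print(f"{key}: {value}")
```

If `rocm_build` is false, the installed PyTorch wheel was probably built for another backend and would need to be replaced with a ROCm build before fine-tuning or inference can use the GPU.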

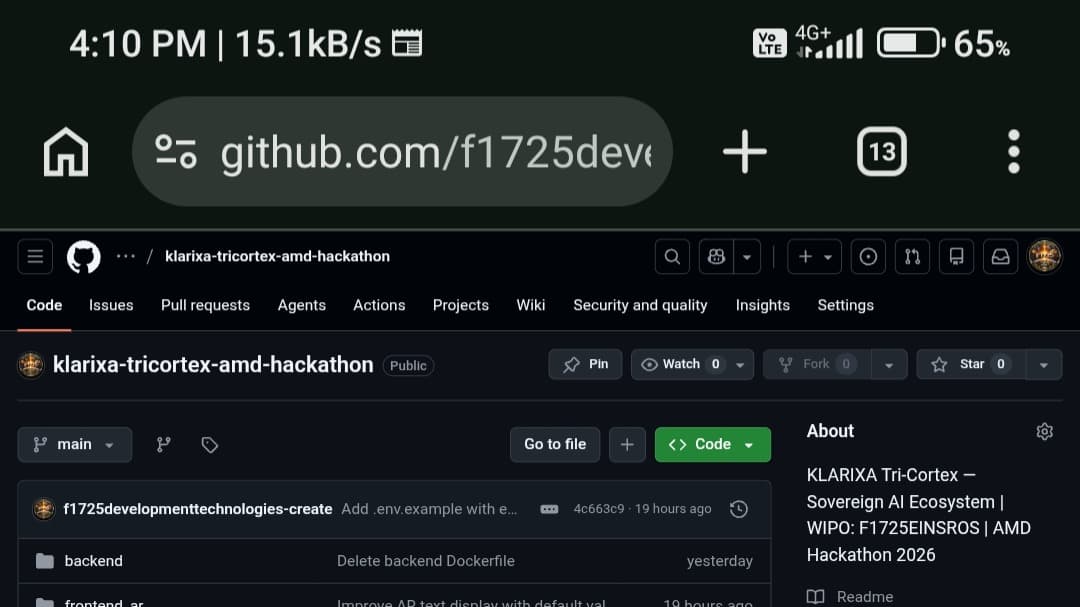

AMD Developer Cloud AI technology Hackathon projects

Discover innovative solutions crafted with AMD Developer Cloud AI technology, developed by our community members during our engaging hackathons.