Hugging Face Spaces

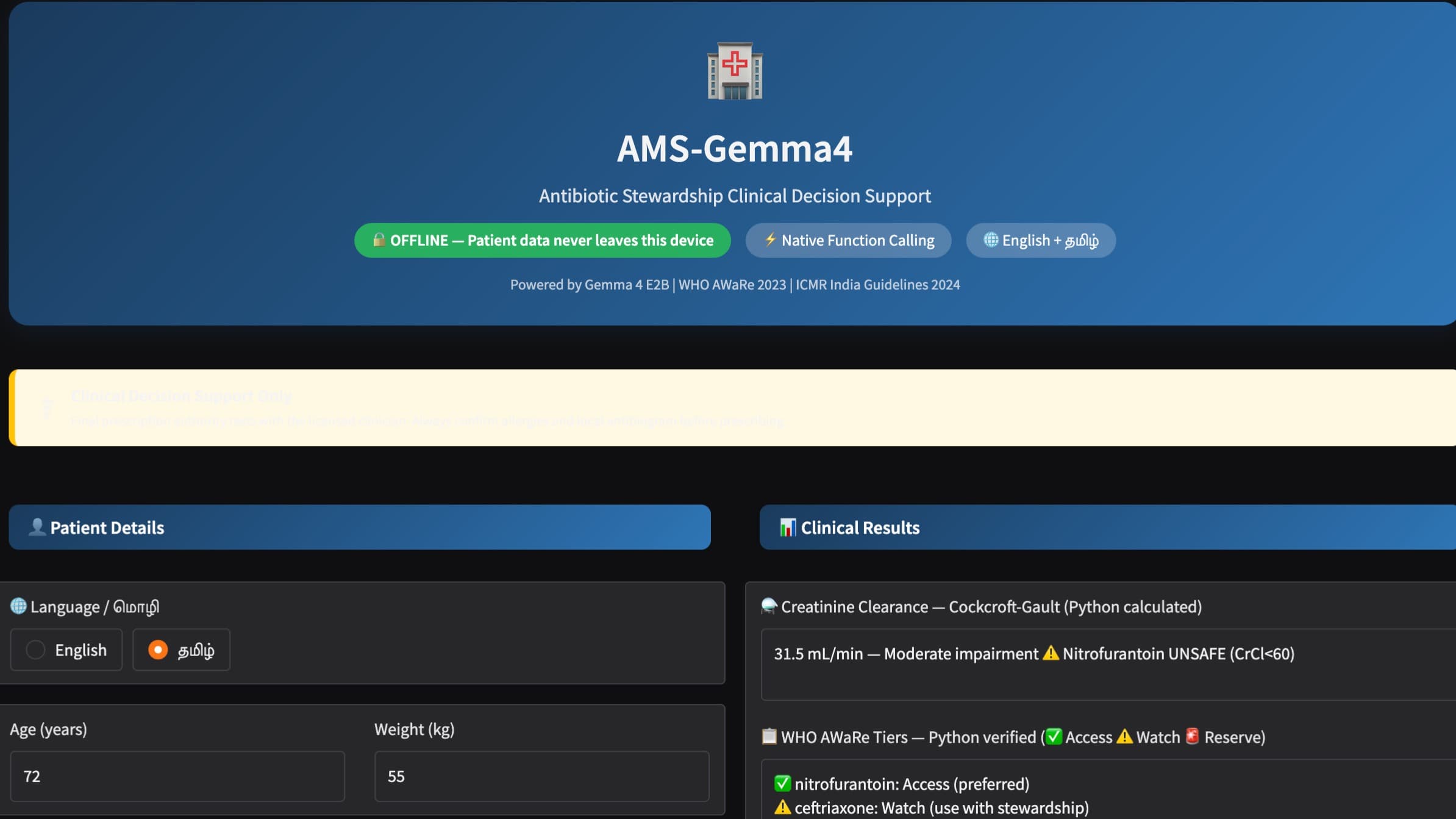

Hugging Face Spaces is a hosting platform for interactive machine learning applications. Developers build demos and tools using Gradio or Streamlit, then deploy them on Hugging Face infrastructure and receive a public URL. Spaces are free to run on shared CPU instances and can be upgraded to GPU-backed hardware for workloads that need faster inference. They are widely used during hackathons and AI events to share working prototypes without managing any server infrastructure.

| General | |
|---|---|
| Author | Hugging Face |
| Type | AI Application Hosting Platform |
| Website | huggingface.co/spaces |
| Documentation | Spaces Documentation |
| Hardware Options | CPU (free), T4, A10G, A100, H100 |
| Frameworks | Gradio, Streamlit, Docker |

Start building with Hugging Face Spaces

Spaces is the quickest path from a working model to a shareable demo. Connect a model from the Hub, wrap it in a Gradio interface, and push to a Space; the application goes live with a public URL in minutes. GPU instances are billed by the hour for workloads that need accelerated inference. During hackathons on lablab.ai, submitting a Hugging Face Space link is a standard way to present a working project. Spaces created under an event's Hugging Face organization are publicly discoverable, and community members can vote with likes. Explore examples at Hugging Face Use Cases and Applications.
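The "wrap it in a Gradio interface" step can be sketched as a small `app.py`. This is a minimal illustration, not a reference implementation: `classify` is a hypothetical keyword-based stand-in for a real model from the Hub, and `build_demo` is a name chosen for this example. Gradio is imported lazily so the prediction function can be tested on its own.

```python
def classify(text: str) -> dict[str, float]:
    """Hypothetical stand-in for a Hub model: keyword-based sentiment scores."""
    positive = {"good", "great", "love", "excellent"}
    negative = {"bad", "poor", "hate", "terrible"}
    # Normalize each word by lowercasing and trimming trailing punctuation.
    words = [w.strip(".,!?") for w in text.lower().split()]
    pos = sum(w in positive for w in words)
    neg = sum(w in negative for w in words)
    total = pos + neg
    if total == 0:
        # No sentiment keywords found: report an even split.
        return {"positive": 0.5, "negative": 0.5}
    return {"positive": pos / total, "negative": neg / total}


def build_demo():
    """Wrap classify() in a Gradio interface (requires `pip install gradio`)."""
    import gradio as gr  # lazy import: classify() stays usable without Gradio

    return gr.Interface(
        fn=classify,
        inputs=gr.Textbox(label="Text"),
        outputs=gr.Label(label="Sentiment"),  # Label renders a label -> score dict
        title="Sentiment demo",
    )
```

Ending `app.py` with `build_demo().launch()` starts the web server when the file is run. Swapping `classify` for a call into a real model is the only change the interface code should need.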

Hugging Face Spaces Tutorials

Getting Started

- Spaces Overview: How to create, configure, and deploy a Space

- Gradio Documentation: Build interactive UIs for ML models with minimal code

- Streamlit Documentation: Alternative framework for building data and ML apps

- Spaces GPU Instances: Upgrade a Space to GPU-backed hardware for faster inference

- Docker Spaces: Deploy any containerized application as a Space

Key Features

Instant deployment: Push a Gradio or Streamlit app to a Space repository and get a live URL without any server configuration or DevOps setup.
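One way to script that push is with the `huggingface_hub` client, sketched below under a few assumptions: the app folder already contains `app.py`, you are authenticated (for example via a stored token), and the helper names `space_readme`, `prepare_folder`, and `deploy` are invented for this example. A Space reads its settings from YAML front matter at the top of `README.md`; only three of the available keys are shown.

```python
from pathlib import Path


def space_readme(title: str, sdk: str = "gradio", app_file: str = "app.py") -> str:
    """Render the YAML front matter a Space reads from README.md."""
    return (
        "---\n"
        f"title: {title}\n"
        f"sdk: {sdk}\n"
        f"app_file: {app_file}\n"
        "---\n"
    )


def prepare_folder(folder: str, title: str) -> Path:
    """Write README.md with the Space metadata into an existing app folder."""
    readme = Path(folder) / "README.md"
    readme.write_text(space_readme(title), encoding="utf-8")
    return readme


def deploy(folder: str, repo_id: str) -> None:
    """Create the Space if needed and upload the folder.

    Requires `pip install huggingface_hub` and valid credentials.
    `repo_id` has the form "user-or-org/space-name".
    """
    from huggingface_hub import HfApi  # lazy import: helpers above need no network

    api = HfApi()
    api.create_repo(repo_id=repo_id, repo_type="space", space_sdk="gradio", exist_ok=True)
    api.upload_folder(folder_path=folder, repo_id=repo_id, repo_type="space")
```

A typical call would be `prepare_folder("my_app", "My Demo")` followed by `deploy("my_app", "your-username/my-demo")`, where both names are placeholders. Pushing with plain `git` to the Space repository is an equally valid route.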

GPU hardware tiers: Upgrade to T4, A10G, A100, or H100 instances for workloads that need GPU acceleration. Pricing is hourly with no long-term commitment.
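Because billing is hourly, the budget for an event is simple arithmetic: rate times hours. The sketch below illustrates the calculation only. The rates in `PLACEHOLDER_RATES` are made-up numbers, not Hugging Face prices, so check the current pricing page before relying on any figure.

```python
# Placeholder USD-per-hour rates for illustration ONLY; these are not real prices.
PLACEHOLDER_RATES = {
    "CPU": 0.00,   # the shared CPU tier is free
    "T4": 0.50,    # hypothetical
    "A10G": 1.00,  # hypothetical
}


def estimate_cost(tier: str, hours: float, rates: dict[str, float] = PLACEHOLDER_RATES) -> float:
    """Return the estimated cost of running one Space on `tier` for `hours`."""
    if hours < 0:
        raise ValueError("hours must be non-negative")
    if tier not in rates:
        raise KeyError(f"no rate configured for tier {tier!r}")
    return round(rates[tier] * hours, 2)


def weekend_budget(tier: str, rates: dict[str, float] = PLACEHOLDER_RATES) -> float:
    """Cost of keeping a Space running for a 48-hour hackathon."""
    return estimate_cost(tier, 48, rates)
```

With the placeholder rates, `weekend_budget("T4")` evaluates to `24.0`. Downgrading the hardware as soon as judging ends stops further charges, since there is no long-term commitment.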

Organization Spaces: Create Spaces under a team or event organization so project submissions stay grouped and discoverable by judges and community members.

Persistent storage: Attach a storage volume to a Space for stateful applications that need to read or write files that survive between requests and restarts.
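A stateful app can be written so it works both with and without an attached volume. The sketch below assumes the volume is mounted at `/data`, which is where Spaces persistent storage is documented to appear; verify this for your setup. It falls back to a local folder when that path is absent. The function names and the `state.json` file name are choices made for this example.

```python
import json
import os
from pathlib import Path


def storage_dir(mount: str = "/data", fallback: str = "./local_data") -> Path:
    """Return the persistent mount if it exists and is writable, else a local folder."""
    root = Path(mount)
    if root.is_dir() and os.access(root, os.W_OK):
        return root
    local = Path(fallback)
    local.mkdir(parents=True, exist_ok=True)  # created on first use
    return local


def save_state(state: dict, base: Path, name: str = "state.json") -> Path:
    """Serialize `state` as JSON under `base` and return the file path."""
    path = base / name
    path.write_text(json.dumps(state), encoding="utf-8")
    return path


def load_state(base: Path, name: str = "state.json") -> dict:
    """Load previously saved state, or an empty dict if nothing was saved yet."""
    path = base / name
    if not path.exists():
        return {}
    return json.loads(path.read_text(encoding="utf-8"))
```

Calling `storage_dir()` once at startup and routing every read and write through the returned path keeps the app code identical on a laptop and on a Space.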

Community discovery: Spaces are publicly indexed on Hugging Face and sortable by likes, making them a practical way to share and showcase AI projects.

Boilerplates

- Gradio Chatbot Template: Starter Space for building a conversational AI interface

- Streamlit App Template: Starter Space for data and model visualization apps

Hugging Face Spaces Hackathon Projects

Discover solutions built with Hugging Face Spaces by our community members during our hackathons.