Bakhtawar Iftikhar (@bk_ifti251)

Events attended: 2

Submissions made: 2

1 year of experience

About me

I'm a graduate electrical engineer with a postgraduate degree in AI. My previous experience was working for a sports-tech startup, developing their ML/CV pipeline to analyze and predict errors in bowling movements. I also have experience with Agentic AI, primarily building with LangChain.

🤓 Latest Submissions

AdaptiFleet

Traditional warehouse automation has improved efficiency, yet many systems remain rigid, expensive, and difficult to adapt when workflows or layouts change. Even small adjustments often require specialized expertise or time-consuming reprogramming. This creates a disconnect between what operators need robots to do and how easily they can communicate those needs, a challenge we call the “Human Intent Gap.”

AdaptiFleet was designed to close this gap by enabling intuitive, AI-driven fleet control. Instead of relying on complex interfaces or predefined scripts, users interact with autonomous robots using natural language. Commands such as “Get me three bags of chips and a cold drink” are interpreted and translated into structured robotic tasks automatically.

At its core, AdaptiFleet leverages Gemini-powered Vision Language Models (VLMs) to understand user intent and visual context. Robots operate within a dynamic decision framework, allowing them to adapt to changing environments rather than follow rigid, pre-programmed routes.

The platform integrates a digital twin simulation stack built on Isaac Sim, enabling teams to validate behaviors, test workflows, and optimize multi-robot coordination before live deployment. Once deployed, ROS2 and Nav2 provide robust navigation, dynamic path planning, and collision avoidance. The VLM orchestration layer continuously analyzes visual inputs to support scene understanding, anomaly detection, and proactive hazard awareness. When conditions change, AdaptiFleet autonomously re-plans routes and tasks, reducing downtime and operational disruption.

By combining conversational interaction, real autonomy, and simulation-driven validation, AdaptiFleet simplifies robotic deployments while improving efficiency and visibility. The result is an automation system that is adaptive, scalable, and aligned with how people naturally work.
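To make the idea of translating a spoken request into structured robotic tasks more concrete, here is a minimal sketch in Python. It is not AdaptiFleet's actual schema: the `PickTask` type, the `parse_tasks` helper, and the JSON layout are all hypothetical, and the JSON string stands in for whatever the VLM would return for the example command.

```python
import json
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class PickTask:
    """Hypothetical structured task: fetch `quantity` units of `item`."""
    item: str
    quantity: int


def parse_tasks(model_output: str) -> List[PickTask]:
    """Validate a JSON task list (assumed model output) into PickTask objects."""
    payload = json.loads(model_output)
    tasks = []
    for entry in payload["tasks"]:
        quantity = int(entry["quantity"])
        if quantity < 1:
            raise ValueError(f"quantity must be positive, got {quantity}")
        tasks.append(PickTask(item=str(entry["item"]), quantity=quantity))
    return tasks


# Assumed model output for "Get me three bags of chips and a cold drink".
example_output = (
    '{"tasks": ['
    '{"item": "bag of chips", "quantity": 3}, '
    '{"item": "cold drink", "quantity": 1}'
    ']}'
)

tasks = parse_tasks(example_output)
for task in tasks:
    print(task)
```

Validating the model output before it reaches the planner is one plausible design choice here: a malformed or nonsensical quantity is rejected up front instead of being dispatched to a robot.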

15 Feb 2026

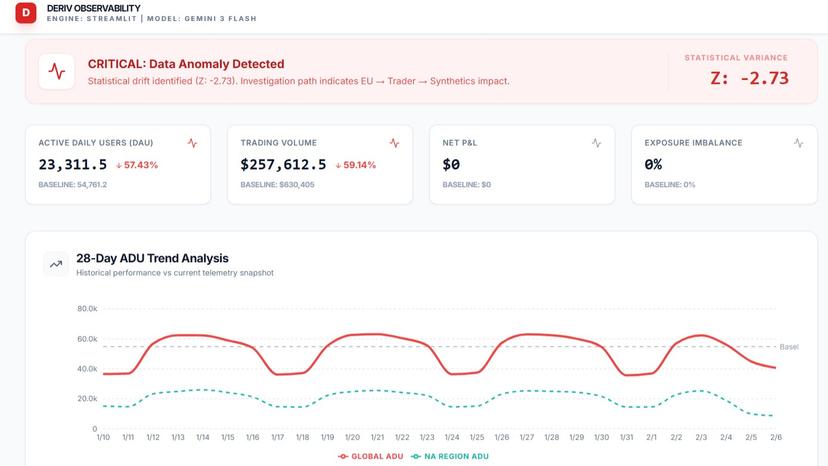

DAIA - Deriv AI Analyst

DAIA (Deriv AI Analyst) is a real-time enterprise observability platform built for Deriv's business operations. It monitors Active Daily Users, Trading Volume, Regional Performance, Platform Health, and Instrument Activity, automatically detecting anomalies that require executive attention.

The system uses a 4-layer intelligence pipeline: a Correlation Engine for streaming telemetry, an LLM Reasoning Agent for contextualizing deviations, a Severity Scorer using z-score analysis, and an Executive Briefing Generator for actionable reports.

DAIA operates through three Gemini-powered agents:

Agent 1 (Analyst): Performs statistical anomaly detection across regions (NA, EU, APAC), platforms (Trader, MT5), and instruments (Synthetics, FX, Stocks) using rolling baselines, percentage deviations, and z-scores.

Agent 2 (Reporter): Generates downloadable executive briefing reports with Market Overview, Regional Drivers, Risk Commentary, and prioritized Action Items with timelines.

Agent 3 (Advisor): Provides an interactive chat interface for follow-up questions, root cause analysis, and investigation path exploration using live analysis data.

The platform features a healthy-to-anomaly state transition. By default, the dashboard shows all systems nominal. When new telemetry is uploaded, the system processes it in real time: KPI cards turn red, trend charts reveal drops, regional charts expose impacted areas, and an investigation path traces the anomaly from region to platform to instrument.

Key features: live CSV upload with Gemini analysis, 28-day ADU trend chart, dynamic regional and instrument charts, auto-generated executive reports, and persistent AI chat for deep-dive investigation.

The backend uses Python/Streamlit with 3-tier anomaly detection. The frontend uses React, TypeScript, Recharts, and Gemini API via Google AI Studio. DAIA transforms raw business telemetry into executive intelligence, turning data noise into actionable decisions within seconds.
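The rolling-baseline, percentage-deviation, and z-score checks mentioned above can be sketched as follows. This is an illustrative sketch, not DAIA's implementation: the function names, the 7-day window, the severity thresholds of 2 and 3, and the sample numbers are all assumptions.

```python
from statistics import mean, stdev


def score_latest(series, window=7):
    """Score the latest value against a rolling baseline of the prior `window` values."""
    if len(series) < window + 1:
        raise ValueError("need at least window + 1 observations")
    baseline = series[-(window + 1):-1]  # the `window` values before the latest one
    latest = series[-1]
    mu = mean(baseline)
    sigma = stdev(baseline)  # sample standard deviation of the baseline
    pct = (latest - mu) / mu * 100.0 if mu else 0.0
    z = (latest - mu) / sigma if sigma else 0.0  # flat baseline: treat as no signal
    return {"baseline_mean": mu, "pct_deviation": pct, "z_score": z}


def severity(z, warn=2.0, critical=3.0):
    """Map a z-score to a severity label using assumed thresholds."""
    magnitude = abs(z)
    if magnitude >= critical:
        return "critical"
    if magnitude >= warn:
        return "warning"
    return "normal"


# Made-up daily user counts: a stable week followed by a sharp drop.
daily_users = [100, 102, 98, 101, 99, 100, 100, 70]
result = score_latest(daily_users)
label = severity(result["z_score"])
print(result, label)
```

The percentage deviation gives a figure that is easy to read in a briefing, while the z-score scales the same gap by how noisy the baseline normally is, which is why the two are often reported together.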

7 Feb 2026
