NVIDIA

NVIDIA Corporation is a global leader in accelerated computing, specializing in the design of graphics processing units (GPUs) for the gaming, professional visualization, data center, and automotive markets. As a pioneer in parallel computing, NVIDIA has been instrumental in the advancement of artificial intelligence, providing the foundational hardware and software platforms that drive modern AI research and deployment.

| General | |
|---|---|
| Author | NVIDIA Corporation |
| Release Date | 1993 |
| Website | https://www.nvidia.com/ |
| Documentation | https://docs.nvidia.com/ |
| Technology Type | Hardware / AI |

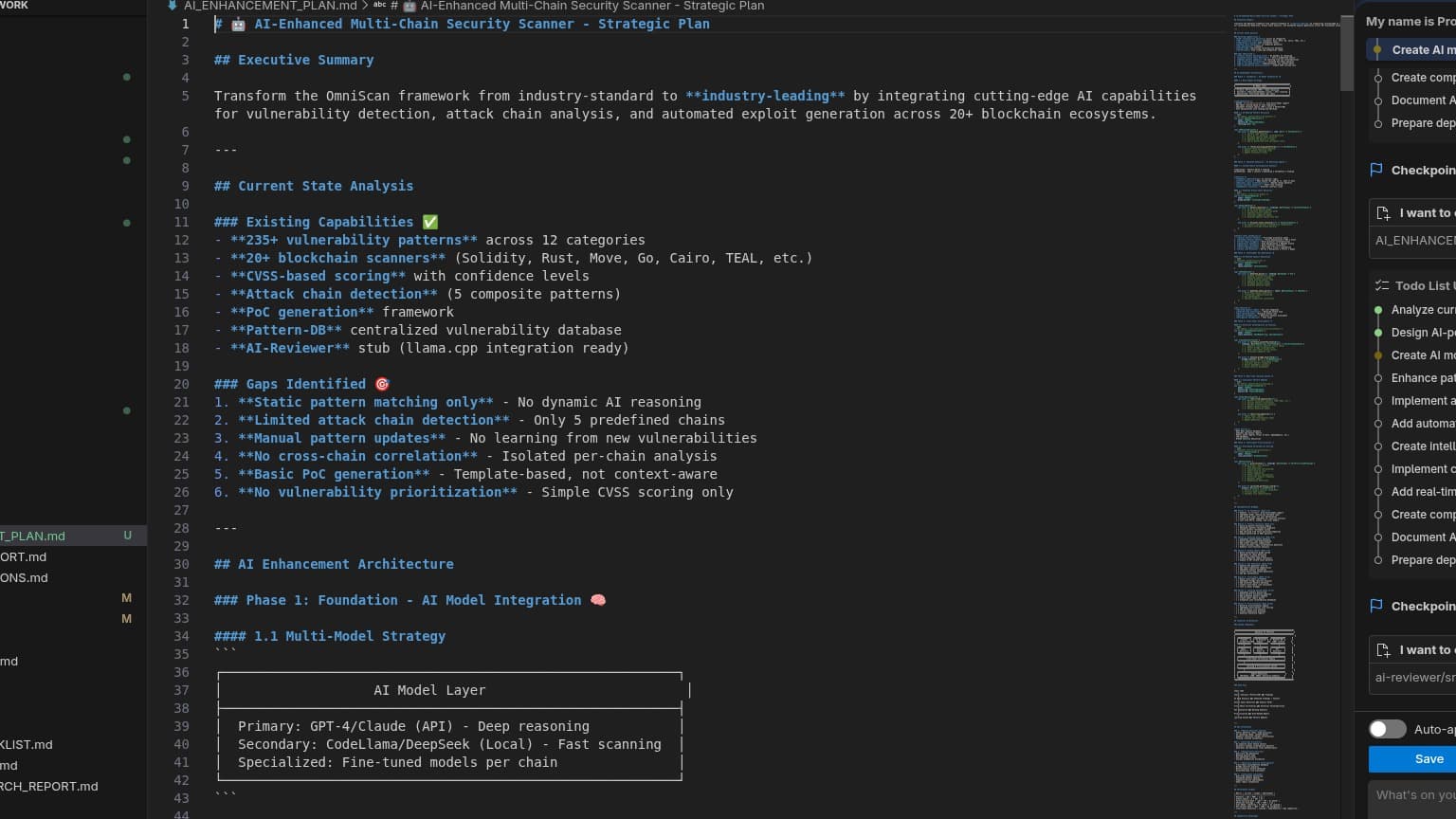

Key Products and Technologies

- GPUs (Graphics Processing Units): High-performance processors essential for parallel computing tasks in AI, machine learning, and deep learning.

- CUDA Platform: A parallel computing platform and programming model that lets developers run general-purpose computations on NVIDIA GPUs, often with large speedups over CPU-only code.

- NVIDIA AI Software Suites: Comprehensive collections of tools and frameworks, such as NVIDIA NeMo for large language model development and deployment, and NVIDIA TensorRT for high-performance deep learning inference.

- NVIDIA Jetson: Edge AI platform for autonomous machines, robotics, and embedded systems.

- NVIDIA Omniverse: A platform for 3D design collaboration and simulation, facilitating the development of virtual worlds and digital twins.

Start Building with NVIDIA

NVIDIA's hardware and software ecosystem accelerates AI development and the deployment of high-performance computing solutions. From data centers to edge devices, NVIDIA technology powers a wide range of AI applications, including agent lifecycle management with tools such as NeMo. To get started, explore the documentation and resources at https://docs.nvidia.com/.

NVIDIA AI Technologies Hackathon projects

Discover solutions built with NVIDIA AI technologies by our community members during our hackathons.
