🤓 Latest Submissions

Conduit

Conduit is an open-source, browser-native shadow-AI governance layer. It catches corporate data the moment it's pasted into a public LLM (ChatGPT, Claude, Gemini, Copilot, Perplexity), inspects the prompt through Veea's Lobster Trap, classifies sensitive content with Google's Gemini, and offers the employee a sanitized version they can actually use. Every event becomes a regulator-readable audit entry.

Components:

1. A Chromium MV3 extension that intercepts paste/submit events on AI domains
2. A FastAPI backend that orchestrates Lobster Trap inspection, Gemini classification, and sanitization
3. A Next.js dashboard for the CISO audit view
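The sanitize-then-audit flow described above can be sketched roughly as follows. This is only an illustration under stated assumptions: it does not call Lobster Trap or Gemini, the regex patterns stand in for the real classification step, and the names `PATTERNS`, `AuditEntry`, `sanitize`, and `handle_paste` are hypothetical, not taken from the Conduit codebase.

```python
import re
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical stand-in patterns. The real project classifies with Gemini
# after Lobster Trap inspection; neither service is called in this sketch.
PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


@dataclass
class AuditEntry:
    """Hypothetical shape of one audit record."""
    timestamp: str
    destination: str
    categories: list[str]
    sanitized: bool


def sanitize(prompt: str) -> tuple[str, list[str]]:
    """Replace each matched span with a placeholder and report what was found."""
    found = []
    for name, pattern in PATTERNS.items():
        prompt, count = pattern.subn(f"[{name.upper()} REDACTED]", prompt)
        if count:
            found.append(name)
    return prompt, found


def handle_paste(prompt: str, destination: str) -> tuple[str, AuditEntry]:
    """Sanitize a pasted prompt and build the matching audit entry."""
    clean, categories = sanitize(prompt)
    entry = AuditEntry(
        timestamp=datetime.now(timezone.utc).isoformat(),
        destination=destination,
        categories=categories,
        sanitized=bool(categories),
    )
    return clean, entry
```

Calling `handle_paste("Email jane.doe@acme.com, SSN 123-45-6789 on file", "chatgpt.com")` returns the redacted prompt together with an entry listing the `email` and `us_ssn` categories.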

19 May 2026

Sentinel - Compliance Engineering Copilot

Sentinel is a compliance engineering copilot for healthcare and fintech teams. It turns HIPAA, SOC 2, PCI, and GDPR compliance from a periodic, $50K external audit cycle into a continuous, $0.03-per-PR CI gate. It ships three coordinated agentic surfaces:

1. A Bob IDE skill pack: five custom modes (hipaa-auditor, soc2-auditor, pci-auditor, phi-tracer, remediation-engineer) that load into any team's Bob IDE, where /audit-hipaa, /trace-phi, and /remediate <id> run inline.
2. A GitHub Actions agent: on every pull request, sentinel watch scans only the changed files, posts a sticky comment with a severity table and inline diff annotations, and fails the check on critical findings, so non-compliant code stops merging.
3. A CLI + Next.js dashboard: sentinel init for one-command install, sentinel scan / remediate for batch audits, and a dashboard with a PHI data-flow graph, per-PR verification badges, and remediation patches with cross-file blast-radius analysis.

Sentinel doesn't just audit; it also verifies its own fixes. After each remediation patch is applied, Sentinel re-audits the patched files and labels every fix verified-resolved, partial, regression, or neutral. The agent closes its own loop.

Across 12 IBM Bob IDE task sessions ($18.72 / 40 Bobcoins, ~47% of the hackathon allocation), Bob designed every HIPAA / SOC 2 / PCI / GDPR control, wrote the test suite (38 cases, all passing), built the watch agent, the init wizard, and the verification loop, and generated 30 per-control reference documentation pages. Every shipped feature traces back to a Bob IDE task ID. Receipts are in bob_sessions/.
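One way to picture the verification loop is as a comparison of audit findings before and after a patch. The sketch below is an assumption about how the four labels could be assigned, not Sentinel's actual rules: the function name `label_fix`, the finding IDs, and the precedence of the checks are all hypothetical.

```python
def label_fix(targeted: set[str], before: set[str], after: set[str]) -> str:
    """Label one remediation patch from two audits of the same files.

    targeted: finding IDs the patch was meant to fix (hypothetical IDs)
    before:   finding IDs reported before the patch
    after:    finding IDs reported by the re-audit
    """
    introduced = after - before      # findings that did not exist before the patch
    remaining = targeted & after     # targeted findings that are still reported

    if introduced:
        return "regression"          # assumed rule: any new finding outranks progress
    if not remaining:
        return "verified-resolved"   # every targeted finding is gone
    if remaining != targeted:
        return "partial"             # some, but not all, targeted findings are gone
    return "neutral"                 # the patch changed nothing the audit can see
```

For example, a patch targeting `{"H-1", "H-2"}` whose re-audit still reports `H-2` would be labeled `partial` under these assumed rules.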

17 May 2026

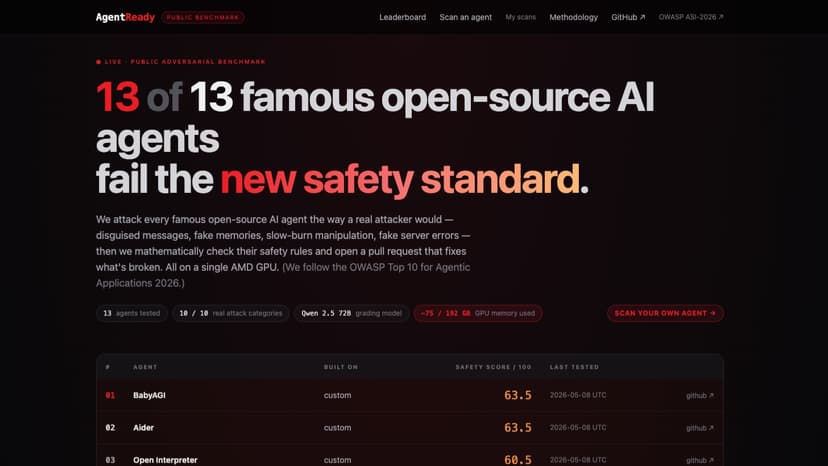

AgentReady

AI agents are like helpful robots that read your messages and do things for you: sending emails, writing code, even moving money. They're getting really popular. But there's a problem: they're easy to trick. OWASP, the people who write safety rules for the internet, published a list of 10 ways to trick AI agents in December 2025. Brand new. And nobody had built a public tool to check if your agent could be tricked. So I built one.

It uses two AI robots that run side by side on a single AMD MI300X GPU. The small robot pretends to be a sneaky attacker. It tries about 46 different tricks per agent: fake memos, fake calendar invites, fake messages that look like they came from another robot, and slow-burn manipulation over multiple turns. The big robot reads each conversation and decides if the trick worked.

Then I pointed it at 13 well-known open-source AI agents on GitHub, including BabyAGI, Cline, AutoGPT, Aider, AutoGen, and CrewAI. All 13 got tricked at least somewhat. Zero made it to the safe zone.

But it doesn't stop there. For every trick that worked, the big robot writes new safety rules to block it. Then we run the same tricks again on the patched agent and watch the score go up. Same tricks, better defense: proof, not vibes. Finally, we open a real pull request on the agent's GitHub so the maintainer can merge the fix in one click.

Why AMD MI300X? The big robot needs about 40 GB of GPU memory. The small robot needs about 16 GB. Running both at once needs around 75 GB. The MI300X has 192 GB, which is plenty. The biggest single Nvidia H100 has only 80 GB, which leaves almost no headroom.
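The memory argument is simple arithmetic, sketched below using only the approximate figures from the write-up. The constant and function names are hypothetical, and reading the gap between the two models' needs and the 75 GB total as runtime overhead is an assumption; the write-up does not say what that gap covers.

```python
# Approximate figures quoted in the write-up, in GB.
BIG_ROBOT_GB = 40      # the judge model
SMALL_ROBOT_GB = 16    # the attacker model
BOTH_RUNNING_GB = 75   # stated requirement with both models loaded

MI300X_GB = 192
H100_GB = 80

# Gap between the two models alone and the stated total
# (assumed here to be runtime overhead; not stated in the write-up).
overhead_gb = BOTH_RUNNING_GB - (BIG_ROBOT_GB + SMALL_ROBOT_GB)


def headroom_gb(capacity_gb: int, required_gb: int = BOTH_RUNNING_GB) -> int:
    """Memory left over on one GPU after loading both models."""
    return capacity_gb - required_gb


print(f"overhead: {overhead_gb} GB")
print(f"MI300X headroom: {headroom_gb(MI300X_GB)} GB")
print(f"H100 headroom: {headroom_gb(H100_GB)} GB")
```

On these figures the MI300X keeps 117 GB free, while an 80 GB card is left with about 5 GB, a margin the author treats as not enough.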

10 May 2026
