🤓 Latest Submissions

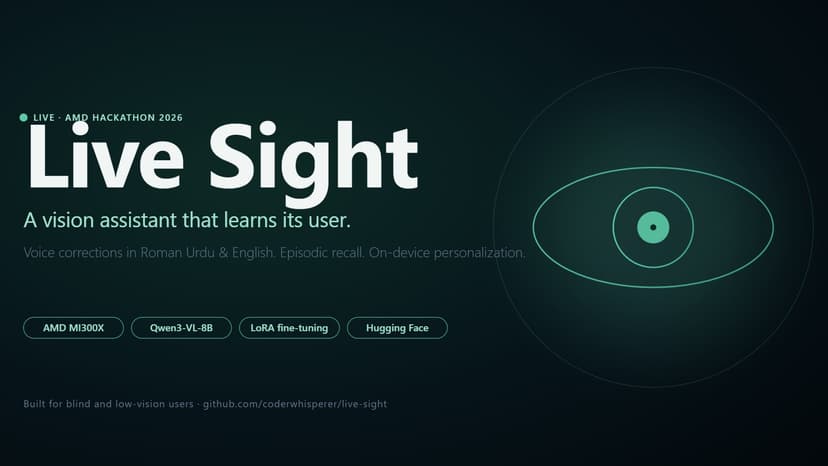

Live Sight

Live Sight is a vision-assistance app for blind and low-vision users that learns from each user's corrections and remembers what they have seen. It runs Qwen3-VL-8B on an AMD MI300X via vLLM, with FastAPI orchestrating four interaction modes (Navigate, Read, Scene, and Ask) plus episodic Recall over the user's interaction history.

Personalization is driven by the user's own corrections. When the model misreads a word (reading "Kumon" as "Kuman" on our test user's note) or mishears a name, the user records a correction in Roman Urdu or English. Whisper transcribes it on-device, the correction is appended to a JSONL log, and a nightly LoRA fine-tune on an AMD MI300X learns from the accumulated corrections. The shipped adapter, v1.2, was trained on 22 corrections drawn from 100+ interactions over 5 days; it keeps earlier fixes (the "Kumon" misreading) while learning new ones (RAKtherm signage). We also trained v1.1 on the full corpus and documented why it regressed. The lesson, that focused signal beats more data at fixed compute, is written up in the iteration log.

Recall is RAG over a sqlite-vss index of multilingual MiniLM-L12-v2 sentence-transformers embeddings: asking "where did I put my keys?" returns the most recent matching scene with its timestamp. The frontend is a React PWA with a tap-to-toggle voice flow, deployed as a Hugging Face Static Space, and the backend tunnels to AMD Developer Cloud via cloudflared. Built in Karachi over 8 days.
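A minimal sketch of the Recall lookup, assuming each logged scene is stored with an ISO-8601 timestamp and an embedding vector. The `SceneRecord` type, the `recall` function, the 0.6 similarity threshold, and the toy 3-dimensional vectors are hypothetical stand-ins: in Live Sight the vectors would come from the MiniLM-L12-v2 encoder, and the nearest-neighbor search would run inside sqlite-vss rather than as the linear scan shown here.

```python
import math
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class SceneRecord:
    """One logged interaction (hypothetical schema): scene text, when it was seen, its embedding."""
    text: str
    timestamp: str          # ISO-8601 in one fixed format, so string order matches time order
    embedding: List[float]  # in Live Sight this would come from the MiniLM-L12-v2 encoder


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def recall(query: List[float], records: List[SceneRecord],
           threshold: float = 0.6) -> Optional[SceneRecord]:
    """Return the most recent record similar enough to the query, or None if nothing matches."""
    # Linear scan for illustration; sqlite-vss would perform this search inside the index.
    matches = [r for r in records if cosine(query, r.embedding) >= threshold]
    if not matches:
        return None
    # Among the matches, prefer the latest sighting over the closest wording.
    return max(matches, key=lambda r: r.timestamp)


# Toy 3-d vectors standing in for real sentence embeddings.
history = [
    SceneRecord("Keys on the kitchen counter", "2026-05-03T09:12:00", [0.9, 0.1, 0.0]),
    SceneRecord("Keys next to the front door", "2026-05-05T18:40:00", [0.8, 0.2, 0.1]),
    SceneRecord("Bus stop sign on Main Road", "2026-05-05T19:05:00", [0.0, 0.1, 0.9]),
]
keys_query = [1.0, 0.1, 0.0]  # pretend embedding of "where did I put my keys?"
hit = recall(keys_query, history)
if hit:
    print(hit.text, "at", hit.timestamp)  # Keys next to the front door at 2026-05-05T18:40:00
```

The older kitchen-counter scene is actually the closer match, but both key scenes clear the threshold, so ranking the matches by timestamp returns the latest place the keys were seen. The more recent bus-stop scene is excluded because it is not similar enough to the query.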

10 May 2026

Oracle Mesh

OracleMesh is a pay-per-answer API for LLM inference. Send a prompt, and Gemini 3 Flash classifies it via function calling and routes it to the cheapest open-source specialist on Featherless that can answer it well: OpenBioLLM for clinical questions, Saul for legal, Suzume for translation, Qwen Coder for code, and so on. The answer is returned to the caller, and payment settles in sub-cent USDC on Arc Testnet via Circle's batched x402 facilitator. No subscription, no credits, no minimums: every call is an atomic financial transaction.

Sub-cent per-call pricing breaks every traditional payment rail. Stripe's fixed fee alone is $0.30 per transaction, and an ERC-20 transfer on Ethereum L1 costs $0.20+ in gas, so a $0.003 inference would lose the seller money on either rail. On Arc with Circle Nanopayments, buyer-side gas is effectively zero (USDC is the native asset, and authorizations are batched off-chain and settled in bulk on-chain), so the seller nets the full revenue.

During the build we completed 362 paid inferences on Arc Testnet, all auditable via Circle's transfer-search API and reproducible with `npm run evidence`. That is more than 7× the hackathon's 50-transaction threshold.

Two endpoints, two pricing models, three Circle tracks. `/infer` is flat-priced ($0.0095 ceiling) for the Per-API Monetization track. `/infer/metered` quotes a dynamic per-call price from the routed model and the estimated token count for the Usage-Based Compute Billing track. A small marketplace UI (browser → server-held wallet → x402 settlement → rendered answer) covers the Real-Time Micro-Commerce track. Beyond Circle, routing diversity (6+ specialists invoked across a 100-call burst) covers the Featherless Specialized Routing track, and strict-schema function calling in `router.ts` covers the Gemini Function Calling track. The submission also includes detailed product feedback for the Circle Product Feedback Incentive ($500), with a working prototype (`txs.ts`) of the batched-settlement explorer view we wanted Circle to ship.
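The fee arithmetic and the kind of quote `/infer/metered` produces can be sketched as plain calculations. This is not OracleMesh's code: the per-1,000-token rates, the model keys, the roughly-4-characters-per-token estimate, and the `quote_metered` and `seller_net` helpers are all hypothetical. The fee model is simplified to a fixed per-transaction charge (Stripe's $0.30 fixed component, ignoring its percentage fee), and no x402 or Circle API calls are made.

```python
import math
from decimal import Decimal, ROUND_UP

# Hypothetical USD rates per 1,000 tokens for each routed specialist.
# Illustrative numbers only; not OracleMesh's real price sheet or Featherless model IDs.
RATE_PER_1K_TOKENS = {
    "openbiollm": Decimal("0.0040"),
    "saul": Decimal("0.0040"),
    "suzume": Decimal("0.0020"),
    "qwen-coder": Decimal("0.0030"),
}

# Smallest USDC amount (the USDC ERC-20 token uses 6 decimal places).
USDC_UNIT = Decimal("0.000001")


def estimate_tokens(prompt: str, max_output_tokens: int) -> int:
    """Rough estimate: about 4 characters per input token, plus the full output budget."""
    return math.ceil(len(prompt) / 4) + max_output_tokens


def quote_metered(model: str, prompt: str, max_output_tokens: int = 256) -> Decimal:
    """Dynamic per-call price from the routed model's rate and the estimated token count."""
    tokens = estimate_tokens(prompt, max_output_tokens)
    price = RATE_PER_1K_TOKENS[model] * tokens / 1000
    # Round up to a whole USDC unit so the quote never undercharges.
    return price.quantize(USDC_UNIT, rounding=ROUND_UP)


def seller_net(price: Decimal, fixed_fee: Decimal) -> Decimal:
    """Revenue the seller keeps after a fixed per-transaction fee."""
    return price - fixed_fee


prompt = "Translate 'where is the pharmacy?' into Japanese."
quote = quote_metered("suzume", prompt)
print("metered quote:", quote)

# The $0.003 inference from the text, on a card rail vs. a near-zero-fee rail.
print(seller_net(Decimal("0.003"), Decimal("0.30")))  # -0.297: the seller loses money
print(seller_net(Decimal("0.003"), Decimal("0")))     # 0.003: the seller keeps it all
```

Using `Decimal` instead of floats keeps sub-cent amounts exact, and quantizing to six decimal places keeps every quote expressible in whole USDC token units.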

26 Apr 2026
