AQ Asad (@AQ_Asad)

Events attended: 1

Submissions made: 1

Saudi Arabia

1 year of experience

🤓 Latest Submissions

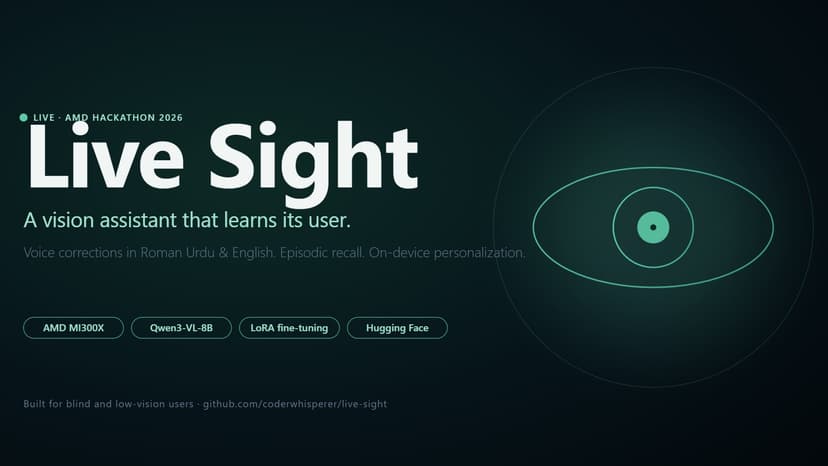

Live Sight

Live Sight is a vision-assistance app for blind and low-vision users that learns from each user's corrections and remembers what they have seen. It runs Qwen3-VL-8B on an AMD MI300X via vLLM, with FastAPI orchestrating four interaction modes (Navigate, Read, Scene, and Ask) plus episodic Recall over the user's interaction history.

The personalization story is honest: when the model misreads a word (reading "Kumon" as "Kuman" on our test user's note) or mishears a name, the user records a correction in Roman Urdu or English. Whisper transcribes it on-device, the correction is saved to the JSONL log, and a nightly LoRA fine-tune on the AMD MI300X learns from the accumulated corrections. The shipped adapter is v1.2, trained on 22 corrections from 100+ interactions over 5 days; it preserves prior corrections (the "Kuman" to "Kumon" fix) while learning new ones (RAKtherm signage). We also tried v1.1 on the full corpus and documented why it regressed. The lesson, that focused signal beats more data at fixed compute, is in the iteration log.

Recall is RAG over a sqlite-vss index of multilingual MiniLM-L12-v2 sentence-transformers embeddings: asking "where did I put my keys?" returns the most recent matching scene with its timestamp.

The frontend is a React PWA with a tap-to-toggle voice flow, deployed as a Hugging Face Static Space. The backend tunnels to AMD Developer Cloud via cloudflared. Built in Karachi over 8 days.
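To illustrate the correction log described above, here is a minimal sketch of appending interactions to a JSONL file and pulling out the corrected ones for a later fine-tune. It is not the project's code: the function names (`log_interaction`, `load_corrections`), the record fields, and the mode labels are all assumptions made for illustration, and the Whisper transcription and LoRA training steps are left out.

```python
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path


def log_interaction(log_path, mode, model_output, correction=None):
    """Append one interaction to a JSONL log (one JSON object per line).

    The field names here are hypothetical, not the project's schema.
    """
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "mode": mode,                  # e.g. "read" or "scene" (assumed labels)
        "model_output": model_output,  # what the model said
        "correction": correction,      # transcribed user correction, or None
    }
    with Path(log_path).open("a", encoding="utf-8") as f:
        # ensure_ascii=False keeps non-ASCII text readable in the log
        f.write(json.dumps(record, ensure_ascii=False) + "\n")
    return record


def load_corrections(log_path):
    """Return only the logged records that carry a user correction."""
    corrections = []
    with Path(log_path).open(encoding="utf-8") as f:
        for line in f:
            line = line.strip()
            if not line:
                continue  # tolerate blank lines in the log
            record = json.loads(line)
            if record.get("correction"):
                corrections.append(record)
    return corrections


def demo():
    """Log two interactions, one corrected, and return the corrected ones."""
    with tempfile.TemporaryDirectory() as tmp:
        log_path = Path(tmp) / "interactions.jsonl"
        log_interaction(log_path, "read", "Kuman", correction="Kumon")
        log_interaction(log_path, "scene", "A desk with a laptop")
        return load_corrections(log_path)
```

Calling `demo()` returns a one-element list holding the "Kuman" record with its "Kumon" correction. An append-only, line-per-record file keeps writes cheap during a session, and a nightly job can filter it down to the small set of corrections it trains on.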

10 May 2026
