Streamlit: Effortless Front-Ends for Your Data Apps

Streamlit is an open-source Python framework that turns data scripts into shareable web apps with minimal effort. Founded in 2018, Streamlit has gained popularity for its ease of use, letting data scientists and developers build and deploy data-driven applications quickly.

| General | |
|---|---|
| Author | Streamlit |
| Repository | https://github.com/streamlit/streamlit |
| Type | Framework for ML and data science apps |

Key Features

- Transforms Python scripts into interactive apps with a few function calls, reducing development time.

- Facilitates real-time interactivity directly from Python code without requiring front-end expertise.

- Supports hot-reloading, allowing instant app updates as the underlying code changes.

- Provides a wide array of built-in widgets, so interactive features can be added without writing any front-end code.
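As a rough illustration of the features above, the sketch below shows a small script that becomes an interactive app. The file name `app.py`, the helper `celsius_to_fahrenheit`, and the widget labels are made up for this example; `st.title`, `st.slider`, and `st.write` are standard Streamlit calls. The import is wrapped in a guard only so the script fails gently when Streamlit is missing.

```python
# app.py (hypothetical file name) - launch with: streamlit run app.py


def celsius_to_fahrenheit(celsius: float) -> float:
    """Plain Python logic; nothing here is Streamlit-specific."""
    return celsius * 9 / 5 + 32


def main() -> None:
    try:
        import streamlit as st
    except ImportError:
        print("Streamlit is not installed; try `pip install streamlit`.")
        return

    st.title("Temperature converter")
    # The slider is a built-in widget. Each time the user moves it,
    # Streamlit reruns the script from top to bottom with the new value.
    celsius = st.slider("Temperature (°C)", min_value=-40, max_value=60, value=20)
    st.write(f"{celsius} °C is {celsius_to_fahrenheit(celsius):.1f} °F")


if __name__ == "__main__":
    main()
```

No HTML, CSS, or JavaScript is involved: the widget and the output are both declared in Python. Saving the file while the app is running lets Streamlit pick up the change and rerun the script, which is the hot-reloading behavior listed above.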

Start building with Streamlit's products

Streamlit offers a range of features designed to simplify the process of app creation and deployment, enhancing productivity in data science and machine learning fields. Explore how you can leverage Streamlit to turn your data projects into interactive applications. Don’t forget to check out the innovative projects built with Streamlit at various tech meetups!

List of Streamlit's products

Streamlit Library

The Streamlit Library allows developers to quickly convert Python scripts into interactive web apps. This library is packed with easy-to-use functionalities that make it straightforward to add widgets, charts, maps, and media files, transforming complex data science projects into user-friendly applications.

Streamlit Sharing

Streamlit Sharing (since renamed Streamlit Community Cloud) provides the hosting infrastructure to share Streamlit apps with the world. It simplifies deployment, enabling users to go from script to app in minutes on a secure and scalable platform.

Streamlit for Teams

Streamlit for Teams is designed for collaboration and enterprise usage, offering additional features like integration with existing databases, advanced security protocols, and customized control for managing user access and data privacy.

System Requirements

Streamlit runs on Linux, macOS, and Windows. Early releases required Python 3.6 or later; recent releases need a newer Python version, so check the installation documentation for the release you plan to use. Hardware requirements are minimal, though performance scales with available CPU and RAM. Deploying apps with Streamlit Sharing requires a stable internet connection, and viewing or interacting with an app requires a modern browser with JavaScript enabled.
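A quick way to check a machine against these requirements is a short standard-library script. This is a minimal sketch: the names `MIN_PYTHON`, `python_is_supported`, and `streamlit_is_installed` are hypothetical, and the `(3, 6)` minimum is simply the figure quoted above, which may be too low for current Streamlit releases.

```python
import importlib.util
import sys

# Assumption: 3.6 is the minimum quoted in this section. Newer Streamlit
# releases may need a newer Python, so adjust this tuple as required.
MIN_PYTHON = (3, 6)


def python_is_supported(version_info=sys.version_info, minimum=MIN_PYTHON) -> bool:
    """Return True if the (major, minor) version meets the minimum."""
    return tuple(version_info[:2]) >= minimum


def streamlit_is_installed() -> bool:
    """Return True if the `streamlit` package can be found for import."""
    return importlib.util.find_spec("streamlit") is not None


if __name__ == "__main__":
    print("Python OK:", python_is_supported())
    print("Streamlit installed:", streamlit_is_installed())
```

If the second check reports `False`, installing the package with `pip install streamlit` is the usual next step.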

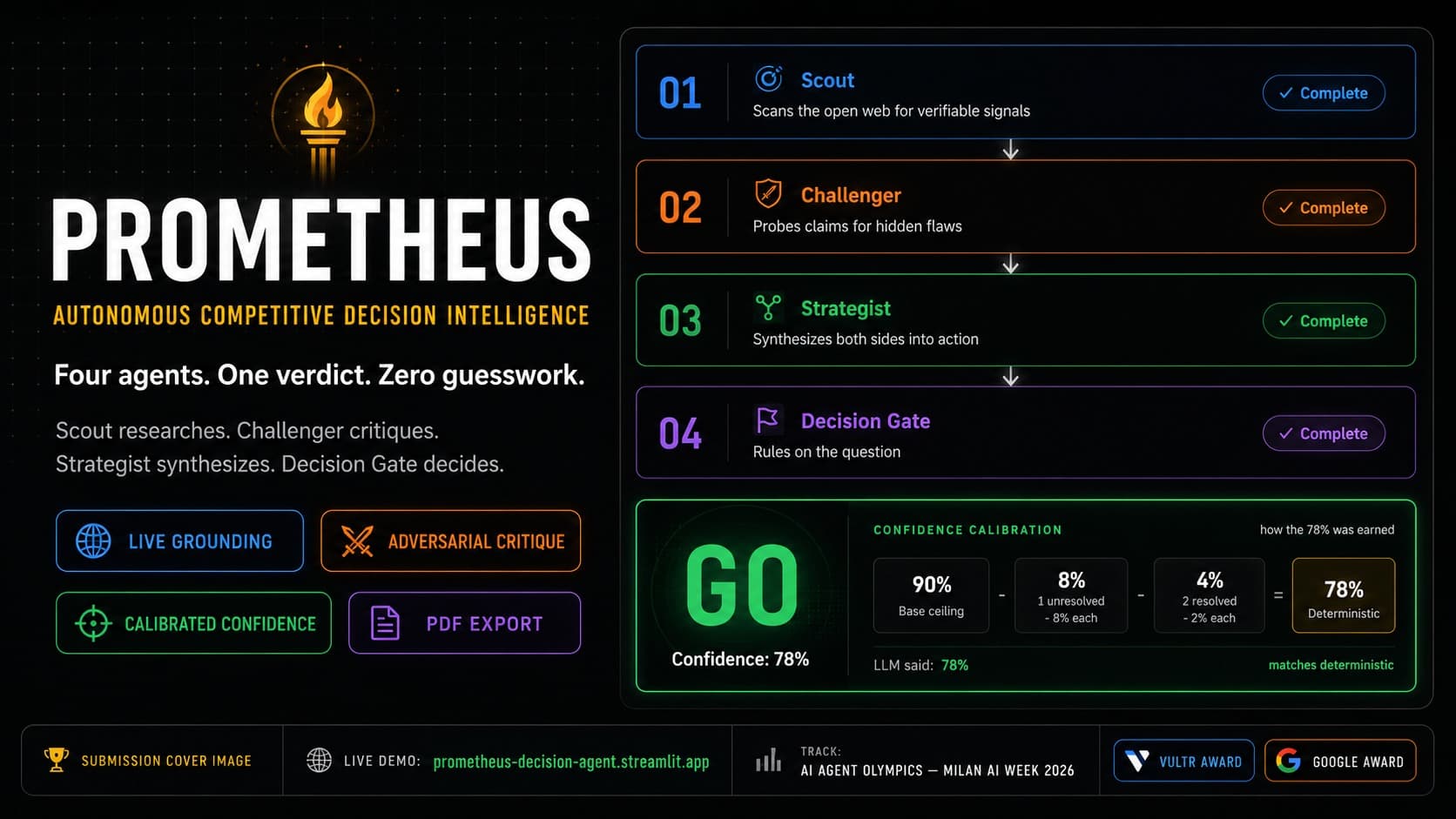

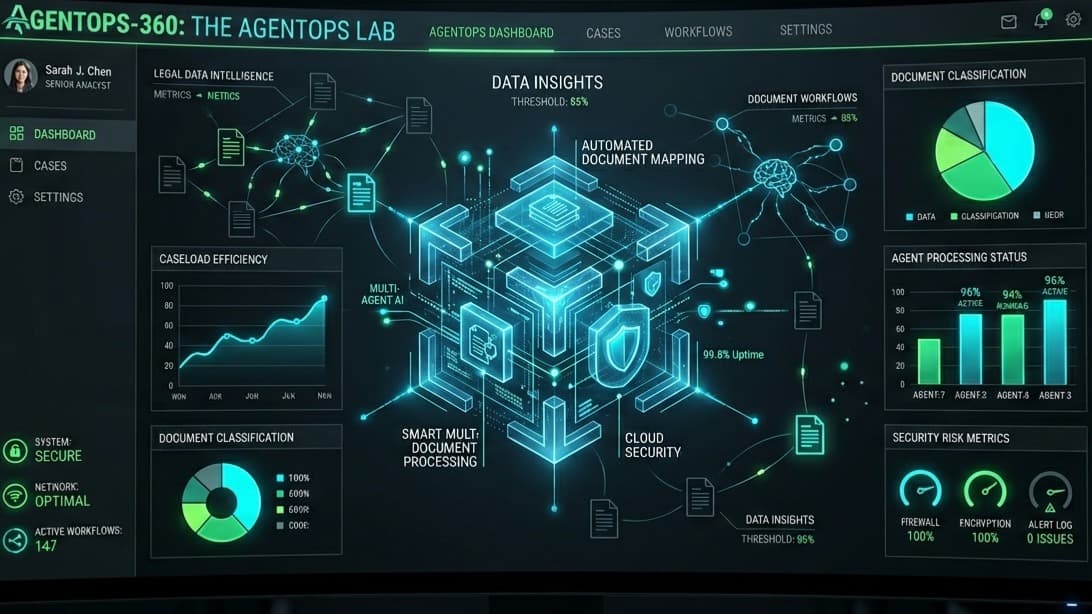

Streamlit Hackathon projects

Discover innovative solutions built with Streamlit by our community members during our hackathons.