Qwen3

Qwen3 is the third-generation text model family from Alibaba Cloud's Qwen team, released on April 28, 2025. It covers six dense sizes (0.6B to 32B) and two MoE variants, all trained on approximately 36 trillion tokens across 119 languages. A key design choice is a unified thinking and non-thinking mode in every model, so developers can choose between step-by-step reasoning and fast single-pass responses without switching models.

| General | |
|---|---|
| Release date | 28 Apr 2025 |
| Developer | Qwen / Alibaba Cloud |
| Type | Open-weight text LLM family |
| License | Apache 2.0 |
| GitHub | QwenLM/Qwen3 |
| Hugging Face | huggingface.co/Qwen |
| Documentation | qwenlm.github.io/blog/qwen3 |

Core Features

- Thinking/non-thinking mode: every model supports both step-by-step chain-of-thought reasoning and direct response generation within a single checkpoint.

- Thinking budget: developers can set a token budget for the reasoning phase, allowing inference cost to be tuned per request.

- Long context: models at 4B and above support 131,072-token context windows; 0.6B and 1.7B support 32,768 tokens.

- Multilingual: pretrained on 119 languages and dialects.

- Apache 2.0: all weights are released for commercial use, fine-tuning, and redistribution.
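A minimal sketch of how an application might separate the reasoning phase from the final answer when thinking mode is on. It assumes the raw model output wraps its reasoning in a single `<think>...</think>` block ahead of the answer; that tag format is not stated on this page and should be checked against the model card. The helper name `split_thinking` is hypothetical.

```python
import re

# Assumption: thinking-mode output looks like "<think>reasoning</think>answer".
_THINK_RE = re.compile(r"<think>(.*?)</think>", re.DOTALL)


def split_thinking(text):
    """Return (reasoning, answer) from a raw model response.

    If no <think> block is present (for example in non-thinking mode),
    the reasoning is an empty string and the whole text is the answer.
    """
    match = _THINK_RE.search(text)
    if match is None:
        return "", text.strip()
    reasoning = match.group(1).strip()
    # Everything outside the first <think> block is treated as the answer.
    answer = (text[:match.start()] + text[match.end():]).strip()
    return reasoning, answer


if __name__ == "__main__":
    raw = "<think>\n2 + 2 = 4\n</think>\n\nThe answer is 4."
    reasoning, answer = split_thinking(raw)
    print("reasoning:", reasoning)
    print("answer:", answer)
```

Keeping the two parts separate lets a UI hide or collapse the reasoning, and lets logging count reasoning tokens independently of the answer.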

Model Variants

| Variant | Total Params | Active Params | Context | Best for |
|---|---|---|---|---|
| Qwen3-0.6B | 0.6B | 0.6B | 32K | Edge and on-device |
| Qwen3-1.7B | 1.7B | 1.7B | 32K | Lightweight inference |
| Qwen3-4B | 4B | 4B | 128K | Balanced performance |
| Qwen3-8B | 8B | 8B | 128K | General tasks |
| Qwen3-14B | 14B | 14B | 128K | Higher accuracy |
| Qwen3-32B | 32B | 32B | 128K | Strong reasoning |
| Qwen3-30B-A3B | 30B | 3B | 128K | Efficient MoE |
| Qwen3-235B-A22B | 235B | 22B | 128K | Flagship MoE |
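The table can be treated as a small lookup structure when choosing a variant programmatically. The sketch below encodes the rows above (with 32K and 128K written out as 32,768 and 131,072 tokens) and picks the largest variant that fits a total-parameter budget and a minimum context length. The `Variant` class and the `largest_within` and `active_fraction` helpers are illustrative names, and using total parameters as a stand-in for memory footprint is a simplifying assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Variant:
    name: str
    total_b: float        # total parameters, in billions
    active_b: float       # parameters active per token, in billions
    context_tokens: int   # maximum context window


VARIANTS = [
    Variant("Qwen3-0.6B", 0.6, 0.6, 32_768),
    Variant("Qwen3-1.7B", 1.7, 1.7, 32_768),
    Variant("Qwen3-4B", 4, 4, 131_072),
    Variant("Qwen3-8B", 8, 8, 131_072),
    Variant("Qwen3-14B", 14, 14, 131_072),
    Variant("Qwen3-32B", 32, 32, 131_072),
    Variant("Qwen3-30B-A3B", 30, 3, 131_072),
    Variant("Qwen3-235B-A22B", 235, 22, 131_072),
]


def largest_within(max_total_b, min_context):
    """Largest variant (by total params) within the budget, or None."""
    candidates = [
        v for v in VARIANTS
        if v.total_b <= max_total_b and v.context_tokens >= min_context
    ]
    return max(candidates, key=lambda v: v.total_b, default=None)


def active_fraction(variant):
    """Share of parameters used per token (1.0 for dense models)."""
    return variant.active_b / variant.total_b


if __name__ == "__main__":
    choice = largest_within(max_total_b=10, min_context=100_000)
    print(choice.name if choice else "no variant fits")
    for v in VARIANTS:
        print(f"{v.name}: {active_fraction(v):.1%} of parameters active")
```

The active fraction makes the MoE trade-off visible: Qwen3-30B-A3B stores 30B parameters but uses about a tenth of them per token, so its memory needs resemble a 30B model while its per-token compute is closer to a 3B one.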

Benchmarks

The flagship Qwen3-235B-A22B model scores:

- AIME '24: 85.7

- AIME '25: 81.5

- LiveCodeBench v5: 70.7

- BFCL v3: 70.8

Tools and Resources

- Qwen3 Blog Post: official release notes and technical details.

- Hugging Face (Qwen): download model weights and GGUF files.

- Technical Report: arXiv paper covering architecture, training, and evaluation.

- Ollama: run Qwen3 models locally.

- Qwen API Platform: access models via an OpenAI-compatible API.

Ecosystem and Integrations

- Available on Hugging Face Hub in both standard and GGUF formats.

- Accessible via Alibaba Cloud DashScope using an OpenAI-compatible endpoint.

- Supported by Ollama, LM Studio, and major inference frameworks including vLLM and llama.cpp.

- All sizes can be fine-tuned with standard supervised fine-tuning and RL pipelines.

Qwen3 weights can be downloaded directly from Hugging Face. To use the models via API, generate a key on the Qwen API Platform and follow the Model Studio documentation.
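As a rough illustration of the OpenAI-compatible route, the sketch below builds (but does not send) a chat-completions request using only the Python standard library. Everything specific in it is an assumption to verify against the Model Studio documentation: the base URL is a placeholder, the model name is illustrative, and the `enable_thinking` and `thinking_budget` fields are assumed names for the thinking-mode switch and reasoning token budget described above.

```python
import json
import urllib.request


def build_chat_request(base_url, api_key, model, user_message,
                       enable_thinking=True, thinking_budget=None):
    """Build a POST request for an OpenAI-compatible /chat/completions route.

    Assumption: the provider accepts `enable_thinking` and `thinking_budget`
    as top-level body fields; check the provider docs before relying on this.
    """
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
        "enable_thinking": enable_thinking,
    }
    # Only send a budget when thinking is on and a limit was requested.
    if enable_thinking and thinking_budget is not None:
        payload["thinking_budget"] = thinking_budget

    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_chat_request(
        base_url="https://example.invalid/v1",  # placeholder, not a real endpoint
        api_key="YOUR_API_KEY",                 # placeholder key
        model="qwen3-32b",                      # illustrative model name
        user_message="Summarize the Qwen3 model family in one sentence.",
        thinking_budget=512,
    )
    print(req.full_url)
    print(req.data.decode("utf-8"))
```

Once the endpoint, model name, and field names are confirmed, the request can be sent with `urllib.request.urlopen(req)`, or the same payload can be passed through any OpenAI-compatible client library.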

Qwen Qwen3 AI technology Hackathon projects

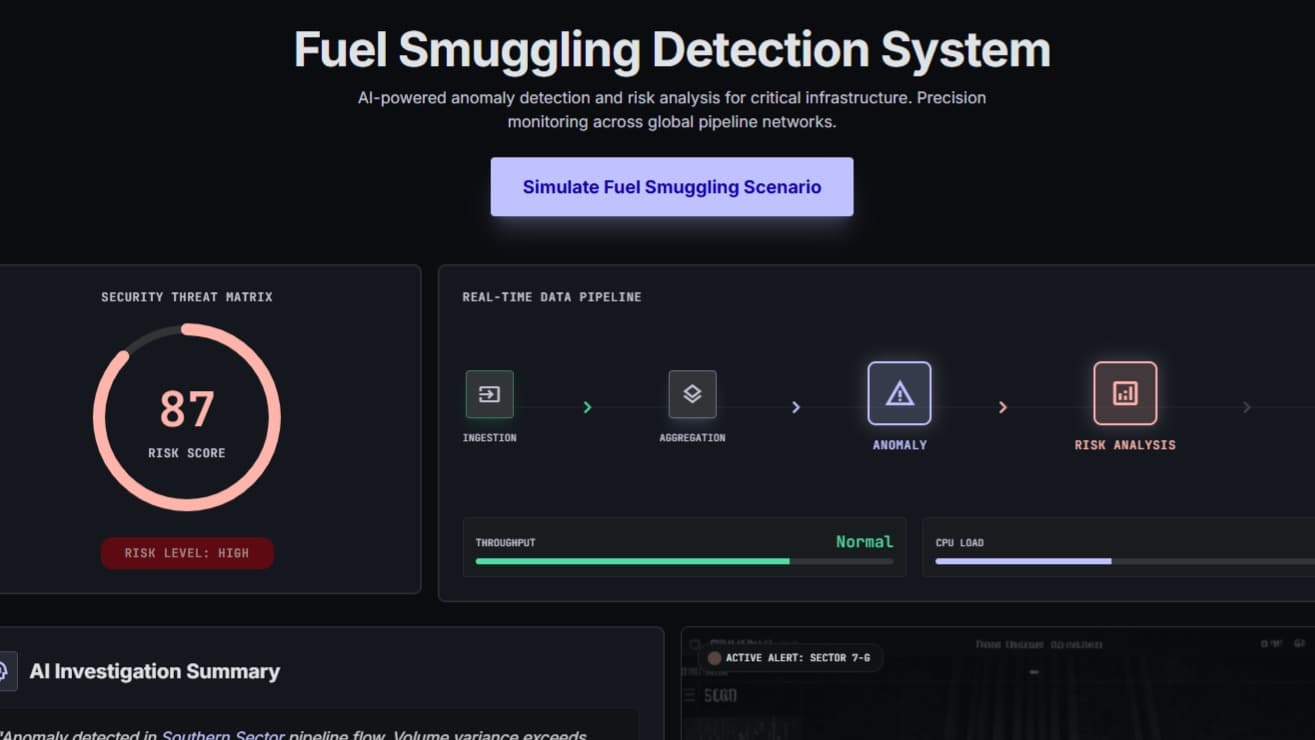

Discover innovative solutions crafted with Qwen Qwen3 AI technology, developed by our community members during our engaging hackathons.