Gemma

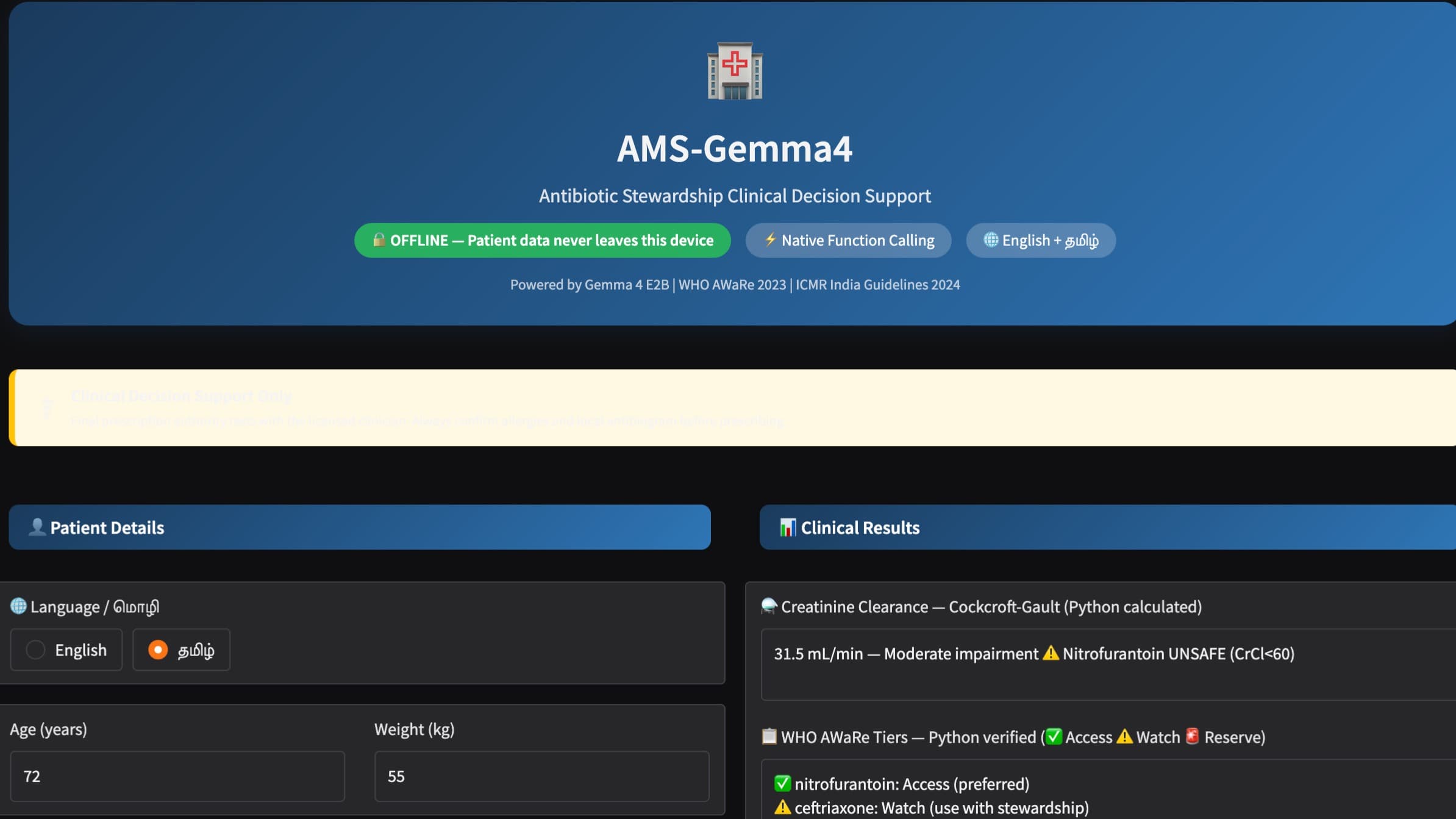

Gemma is a family of lightweight, open large language models (LLMs) from Google, optimized for efficient AI applications. It uses a transformer-based architecture tailored for responsible and accessible AI usage. Developed as a foundational model, Gemma serves basic language processing needs, including chatbots, content summarization, and multilingual support.

| General | |
|---|---|
| Release date | February 2024 |
| Author | Google DeepMind in collaboration with Google AI teams |
| Website | [Google AI Gemma](https://ai.google.dev/gemma) |
| Repository | Google AI Developer Resources |
| Type | Open-source AI, transformer-based LLM |

Key Features

- Efficient Deployment: Available in 2B and 7B parameter sizes, Gemma balances capability with efficiency, enabling deployments on both edge devices and cloud infrastructure.

- Flexible Tuning Options: Offers pre-trained and instruction-tuned variants, allowing developers to fine-tune for specific use cases or deploy as-is.

- Decoder-Only Transformer Architecture: Uses a streamlined decoder-only design with a context window of 8192 tokens in Gemma 1, which helps with long-form text.

- Safety and Accessibility Tools: Integrates responsible AI features, promoting transparency and safety in AI outputs.
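The 8192-token context window means that longer inputs have to be split before they reach the model. The sketch below shows one way to do that on a list of token IDs. The function name `split_into_windows` and the `overlap` parameter are hypothetical helpers introduced for illustration, not part of any Gemma API, and the token IDs are assumed to come from whatever tokenizer you already use.

```python
from typing import List

# Context length of Gemma 1 as stated in the feature list above.
MAX_CONTEXT_TOKENS = 8192


def split_into_windows(
    token_ids: List[int],
    window: int = MAX_CONTEXT_TOKENS,
    overlap: int = 0,
) -> List[List[int]]:
    """Split token IDs into consecutive windows of at most `window` tokens.

    `overlap` tokens are repeated at the start of each following window so
    that some context carries over between chunks (hypothetical design choice).
    """
    if window <= 0:
        raise ValueError("window must be positive")
    if not 0 <= overlap < window:
        raise ValueError("overlap must satisfy 0 <= overlap < window")

    step = window - overlap
    windows: List[List[int]] = []
    for start in range(0, len(token_ids), step):
        windows.append(token_ids[start:start + window])
        # Stop once the current window already reaches the end of the input.
        if start + window >= len(token_ids):
            break
    return windows
```

For example, `split_into_windows(list(range(20000)))` yields three windows of 8192, 8192, and 3616 tokens. Each window can then be processed separately, for instance summarized on its own before the partial summaries are combined.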

Applications:

- Chatbot Development: Optimized for conversational tasks, Gemma provides foundational capabilities for chatbot applications.

- Summarization and Paraphrasing: Its pre-trained model structure makes it suitable for summarizing content across languages and contexts.

- Multilingual Processing: Supports multilingual inputs, making it adaptable for global applications and translation services.
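For chatbot use, the instruction-tuned Gemma variants expect conversation turns wrapped in `<start_of_turn>` and `<end_of_turn>` markers, with the roles `user` and `model`. The following is a minimal sketch of building such a prompt by hand. The function name `format_gemma_chat` is hypothetical, and the sketch assumes the tokenizer adds the beginning-of-sequence token itself, so check the model card of the variant you deploy before relying on it.

```python
from typing import List, Tuple

ALLOWED_ROLES = ("user", "model")


def format_gemma_chat(turns: List[Tuple[str, str]]) -> str:
    """Build a prompt string from (role, text) turns.

    Each turn is wrapped in Gemma's turn markers, and the prompt ends with an
    open `model` turn so the model continues with its reply.
    """
    parts = []
    for role, text in turns:
        if role not in ALLOWED_ROLES:
            raise ValueError(f"unknown role: {role!r}")
        parts.append(f"<start_of_turn>{role}\n{text.strip()}<end_of_turn>\n")
    # Leave the final model turn open for generation.
    parts.append("<start_of_turn>model\n")
    return "".join(parts)
```

A multi-turn history is passed as a list such as `[("user", "Hi"), ("model", "Hello!"), ("user", "Summarize this article.")]`, and the returned string is what gets sent to the model for generation.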

Get started building with Gemma:

Developers can integrate Gemma into applications by accessing its model weights on Google AI Studio and Kaggle. The model's lightweight design lets it run on a wide range of hardware, including mobile and edge devices. Use frameworks such as Keras or JAX to customize and deploy Gemma for your specific use case, and explore the tools and resources available on the Google AI Gemma platform.
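As a rough illustration of the Keras route, the sketch below loads a Gemma preset through KerasNLP and generates text. It assumes that the `keras-nlp` package is installed, that Kaggle credentials are configured and the Gemma terms accepted so the weights can be downloaded, and that the preset name `gemma_2b_en` is the variant you want. The wrapper `generate_text` is a hypothetical helper, not part of the library.

```python
# Assumption: KerasNLP preset name for the 2B English base model.
PRESET = "gemma_2b_en"


def generate_text(prompt: str, max_length: int = 64) -> str:
    """Generate a continuation of `prompt` with a Gemma preset.

    The import is done lazily so that this module can be loaded even on
    machines where keras-nlp is not installed.
    """
    import keras_nlp  # requires `pip install keras-nlp` and a Keras backend

    # Downloads the weights on first use (needs Kaggle access to Gemma).
    model = keras_nlp.models.GemmaCausalLM.from_preset(PRESET)
    # `max_length` caps the total number of tokens, prompt included.
    return model.generate(prompt, max_length=max_length)
```

Calling `generate_text("Gemma is a")` returns the prompt followed by the generated continuation. Loading the model on every call is slow, so a real application would create the model once and reuse it.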
