
NeuralMix

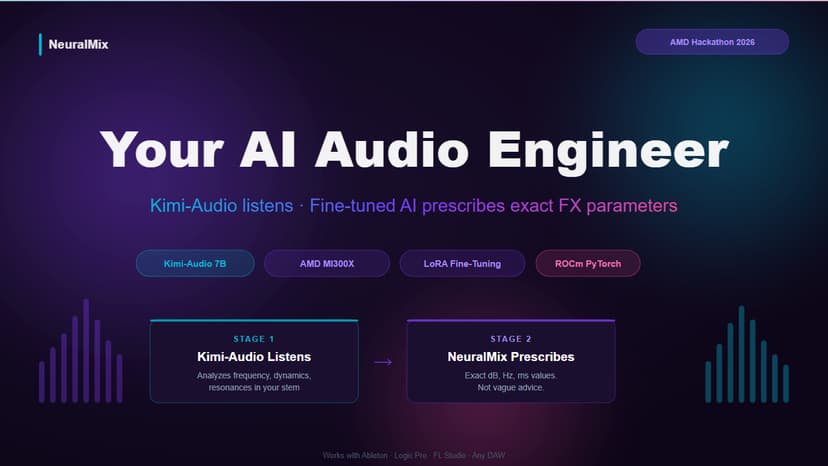

NeuralMix is a two-stage AI audio engineering system that makes professional music mixing accessible to every creator.

THE PROBLEM

Amateur music producers face a $200-800/track wall for professional mixing. Existing AI tools (LANDR, Moises) only output a stereo master with zero per-track control. General LLMs give vague advice like "add warmth" without any actionable parameters.

THE SOLUTION

NeuralMix uses a two-stage pipeline:

Stage 1: Kimi-Audio-7B-Instruct LISTENS to the uploaded stem. Pre-trained on 13 million hours of audio including music, it analyzes frequency balance, dynamic range, and detects specific mixing problems.

Stage 2: Our fine-tuned model PRESCRIBES exact FX parameters. Trained on AMD Instinct MI300X using ROCm PyTorch with LoRA fine-tuning on 183 professional audio engineering instruction-output pairs, it outputs specific values: EQ cuts in Hz, compressor ratios, attack/release in ms.

WHY AMD MI300X

The 192GB HBM3 VRAM enables both models to run without memory swapping, reducing stem analysis latency from 8 seconds (sequential CPU) to under 2 seconds (parallel GPU). This is the difference between a tool that disrupts creative flow and one that enhances it.

REAL PRODUCT

NeuralMix is built on top of an existing DAW-native mixing platform (neuralmix.vaclis.net) with real users including charting artists. The fine-tuned model ships directly into production after the hackathon.

OPEN SOURCE

Apache 2.0 license. All training code, dataset pipeline, and model weights published openly.
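To make the Stage 2 output described under THE SOLUTION more concrete, here is a minimal sketch of how a prescription with EQ cuts in Hz, a compressor ratio, and attack/release times in ms might be parsed and sanity-checked before being applied in a DAW. The JSON layout, the field names (`freq_hz`, `gain_db`, `q`, `ratio`, `attack_ms`, `release_ms`), the `parse_prescription` helper, and the validation ranges are all assumptions made for illustration. They are not taken from the NeuralMix codebase, and the sample values are not real model output.

```python
import json
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class EqBand:
    freq_hz: float   # center frequency of the band
    gain_db: float   # negative values are cuts
    q: float         # bandwidth (quality factor)


@dataclass(frozen=True)
class Compressor:
    ratio: float       # e.g. 4.0 means 4:1
    attack_ms: float
    release_ms: float


@dataclass(frozen=True)
class Prescription:
    eq: Tuple[EqBand, ...]
    compressor: Compressor


def parse_prescription(raw: str) -> Prescription:
    """Parse a hypothetical Stage 2 JSON reply and reject implausible values."""
    data = json.loads(raw)

    eq = tuple(
        EqBand(float(b["freq_hz"]), float(b["gain_db"]), float(b["q"]))
        for b in data["eq"]
    )
    c = data["compressor"]
    comp = Compressor(float(c["ratio"]), float(c["attack_ms"]), float(c["release_ms"]))

    # Assumed sanity limits: audible band for EQ, ratio of at least 1:1,
    # and strictly positive time constants.
    for band in eq:
        if not 20.0 <= band.freq_hz <= 20000.0:
            raise ValueError(f"EQ frequency out of range: {band.freq_hz} Hz")
        if band.q <= 0:
            raise ValueError(f"EQ Q must be positive: {band.q}")
    if comp.ratio < 1.0:
        raise ValueError(f"compressor ratio below 1:1: {comp.ratio}")
    if comp.attack_ms <= 0 or comp.release_ms <= 0:
        raise ValueError("attack and release must be positive")

    return Prescription(eq=eq, compressor=comp)


if __name__ == "__main__":
    # Illustrative input only, not real model output.
    sample = json.dumps({
        "eq": [{"freq_hz": 250, "gain_db": -3.0, "q": 1.4}],
        "compressor": {"ratio": 4, "attack_ms": 10, "release_ms": 120},
    })
    print(parse_prescription(sample))
```

Validating the values at this boundary keeps a malformed or out-of-range model reply from reaching the mixer, which matters when the parameters are applied per track without a human reviewing each one.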

10 May 2026
