🤓 Latest Submissions

path_to_care

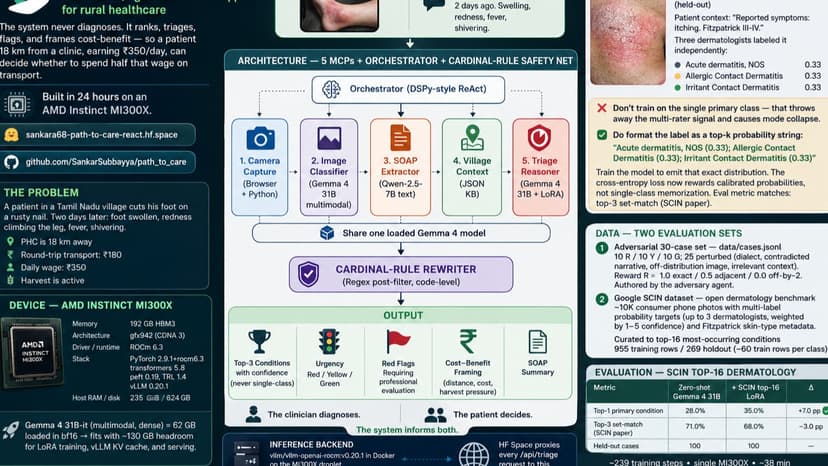

Path to Care is a multimodal, agentic decision-support system for rural healthcare, built in 24 hours on one AMD Instinct MI300X. A patient 18 km from the clinic, earning ₹350/day, faces ₹180 transport, an active harvest, and a swelling foot. Should he go? The system never tells him what's wrong; it helps him decide.

**Cardinal rule (enforced in code).** Always "signs suggest", never "you have." Image output is always **top-3 with confidence**; single-class output is impossible by construction. A regex post-filter rewrites diagnostic phrasing on every output, logged for audit.

**What it does.** (1) Ranks skin conditions as top-3 + confidence; (2) assigns Red/Yellow/Green urgency; (3) flags red signs; (4) frames cost-benefit using local barriers (distance, wage %, harvest, drug-stock). Output: patient brief + pre-visit SOAP.

**Architecture.** Five MCPs orchestrated by a DSPy-style ReAct loop: `camera_capture`, `image_classifier`, `soap_extractor` (Qwen-2.5-7B), `village_context`, `triage_reasoner`. Image-classifier and triage-reasoner share one loaded **Gemma 4 31B-it**.

**Serving.** MI300X 192 GB, ROCm 6.3. `vllm/vllm-openai-rocm:v0.20.1` Docker, base + LoRA served simultaneously via `--enable-lora`. HF Space (Next.js/React) proxies every triage request to the droplet.

**Fine-tuning.** LoRA on Google's SCIN dermatology dataset (top-16 conditions, top-k probability targets): **top-1 28.0% → 35.0% (+7.0 pp)** on a 100-case holdout. First positive delta after 3 documented failures. Lesson: per-class samples > epochs. MedGemma 27B baseline scored 3 pp worse than Gemma 4 31B.

**Frontend.** Patient / Clinician / Audit tabs · browser camera · Web Speech voice dictation · audit panel showing every MCP tool invocation.

**v2.** Village fieldwork · 80-case set · physician review · Fitzpatrick-stratified bias audit · Tamil UX · GRPO/RLVR.

🤗 sankara68-path-to-care-react.hf.space · 💻 github.com/SankarSubbayya/path_to_care
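The cardinal rule above (hedged phrasing, top-3 output, audited rewrites) can be illustrated with a minimal Python sketch. This is not the project's code: the regex patterns, the `AuditLog` class, and the `soften` and `top3` function names are all hypothetical, chosen only to show how a post-filter and a top-3 constraint might be enforced together.

```python
import re
from dataclasses import dataclass, field

# Hypothetical patterns; the project's actual regex list is not shown in the write-up.
_DIAGNOSTIC_PATTERNS = [
    re.compile(r"\byou (?:definitely |probably |likely )?have\b", re.IGNORECASE),
    re.compile(r"\byou are suffering from\b", re.IGNORECASE),
    re.compile(r"\bthe diagnosis is\b", re.IGNORECASE),
]
_HEDGE = "signs suggest"


@dataclass
class AuditLog:
    """Collects one entry per rewrite so every change can be reviewed later."""
    entries: list = field(default_factory=list)


def _hedge_preserving_case(match):
    # Keep sentence-initial capitalisation when the matched phrase started a sentence.
    return _HEDGE.capitalize() if match.group(0)[0].isupper() else _HEDGE


def soften(text, log):
    """Rewrite diagnostic phrasing to hedged phrasing and record each rewrite."""
    out = text
    for pattern in _DIAGNOSTIC_PATTERNS:
        out, count = pattern.subn(_hedge_preserving_case, out)
        if count:
            log.entries.append({"pattern": pattern.pattern, "count": count})
    return out


def top3(probabilities):
    """Return the three most probable (label, confidence) pairs.

    Raising on fewer than three classes is one way to make a
    single-class answer impossible by construction.
    """
    if len(probabilities) < 3:
        raise ValueError("need at least three candidate classes")
    ranked = sorted(probabilities.items(), key=lambda kv: kv[1], reverse=True)
    return ranked[:3]
```

A call such as `soften("You have cellulitis.", AuditLog())` would rewrite the sentence to start with "Signs suggest" and leave one entry in the log, while text without diagnostic phrasing passes through unchanged.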

10 May 2026

Midstream

Midstream solves a critical problem in AI: users pay upfront for output regardless of quality. Whether a research run drifts, code breaks, or an image is off-prompt, you've already paid full price. This wastes 30–70% of AI spending annually.

Our solution: quality-gated streaming payments. The LLM generates output in 32-token chunks at $0.0005 each. Between chunks, the buyer's quality oracle (LLM, compiler, CLIP, etc.) assesses whether to continue paying. If quality drops below threshold, the buyer stops authorizing, the stream ends, and they pay only for the prefix that passed.

We've built a working MVP on Circle Nanopayments + Arc testnet. Circle's batching achieves <1% overhead per chunk, which is impossible on Stripe ($0.30 minimum, 60,000% overhead) or Ethereum ($0.30 gas). Our demo shows:

- Session 1: Full run, 31 chunks, $0.0155
- Session 2: Quality drop at chunk 13, killed, 60% saved
- Session 3: Rapid drift at chunk 4, killed, 87% saved
- Session 4: Compiler catches broken code at chunk 9, killed, 68% saved

Total: 58 chunks paid, $0.0290 spent (vs. $0.0620 full run) = $0.0330 saved.

We've verified 213 Circle Transfer UUIDs settled on Arc testnet via Circle Gateway batching. Every settlement is clickable on testnet.arcscan.app. The architecture is oracle-agnostic: it works with any quality signal (text, code, image, video, schema validation, etc.). This is a platform, not a one-off.
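The quality-gated loop described above can be sketched in a few lines of Python. This is a minimal sketch, not Midstream's implementation: `run_session`, `SessionResult`, and `fixed_fee_overhead_pct` are hypothetical names, the oracle is any callable that scores the text so far, and the sketch assumes the chunk that fails the check is not paid for. Only the $0.0005 chunk price and the $0.30 fixed fee come from the write-up; no real payment API is called.

```python
from dataclasses import dataclass
from typing import Callable, Iterable

PRICE_PER_CHUNK = 0.0005  # USD per 32-token chunk, as quoted in the write-up


@dataclass
class SessionResult:
    chunks_paid: int
    spent: float
    completed: bool


def run_session(
    chunks: Iterable[str],
    oracle: Callable[[str], float],
    threshold: float,
) -> SessionResult:
    """Pay chunk by chunk, stopping as soon as the oracle score falls below threshold.

    Assumption: the oracle scores the accepted prefix plus the new chunk,
    and a chunk that fails the check is not authorized for payment.
    """
    prefix = ""
    paid = 0
    for chunk in chunks:
        candidate = prefix + chunk
        if oracle(candidate) < threshold:
            # Buyer stops authorizing; only the passing prefix has been paid.
            return SessionResult(paid, round(paid * PRICE_PER_CHUNK, 6), False)
        prefix = candidate
        paid += 1
    return SessionResult(paid, round(paid * PRICE_PER_CHUNK, 6), True)


def fixed_fee_overhead_pct(fee: float, price: float = PRICE_PER_CHUNK) -> float:
    """Overhead of a fixed per-payment fee, as a percentage of the chunk price."""
    return fee / price * 100
```

With a toy oracle that returns 0.0 once a marker string appears, a 31-chunk stream that goes bad at its 13th chunk pays for 12 chunks, and a clean 31-chunk stream costs 31 × $0.0005 = $0.0155, matching Session 1. The helper reproduces the quoted Stripe figure: a $0.30 fee on a $0.0005 chunk is 60,000% overhead.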

26 Apr 2026
