🤓 Latest Submissions

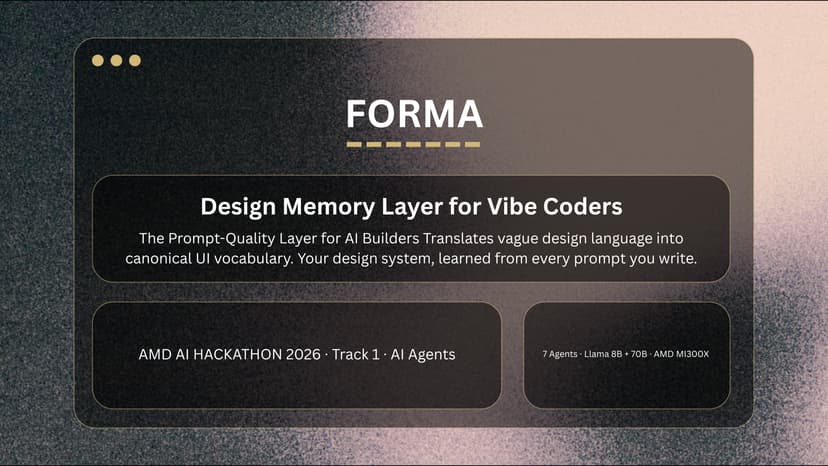

Forma — The Design Memory Layer for Vibe Coders

Forma is the design memory layer for vibe coders. When a user types "popup that slides in" on v0.app, Forma underlines the phrase inline (Grammarly-style), surfaces the canonical UI term ("Off-Canvas Drawer"), and rewrites the prompt with motion timing, layout anchor, and accessibility patterns. The prompt's Forma score jumps from 60 to 95, and the AI builder generates dramatically better UI. The bottleneck in AI-generated software has moved from generation to specification; Forma owns the specification layer.

Forma runs 7 agents on a single AMD MI300X. Five run on Llama 3.1 70B AWQ-INT4 (port 8000): Critic, Reformulator, Style, Memory Engine, and Iteration Coach, covering scoring, rewriting, design-system detection, personalization, and next-prompt suggestion, respectively. Two run on Llama 3.1 8B Instruct (port 30000): a fast Critic for per-keystroke scoring and a Detector that identifies vague phrases inline against our 60-component canonical library.

This is why the MI300X matters. The 70B AWQ model uses 53GB of HBM3, the 8B model uses 15GB, and the KV cache adds ~21GB, for a peak of ~89GB. A single H100 80GB cannot hold all of this concurrently; H100 teams must time-share GPUs or run separate instances. The MI300X's 192GB of HBM3 leaves ~100GB of headroom for parallel users. The hardware capability is the product strategy: the split between Forma's Free tier (8B real-time) and Pro tier (70B deep analysis) is the same decision as which models fit on the GPU.

The Memory Engine is Forma's platform thesis. As users prompt across v0, Cursor, Lovable, Bolt, and base44, Forma builds a persistent design profile (style fingerprint, top components, predicted recommendations) that follows them everywhere. The 70B model reads up to 4096 tokens of history (~12-25s latency) and outputs structured JSON. Live endpoint: /agents/memory-deep.

Honest delineation: inference is real today; the cross-builder data pipeline is mocked with 12 entries (the real pipeline ships in v1.1 with 32K context). Multi-site support is v1.1; today's surface is v0.app. The full delineation is in the README.
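
The memory arithmetic above can be checked with a short sketch. It uses only the figures quoted in the description (53GB, 15GB, ~21GB, and the 192GB and 80GB capacities); the constant and function names are hypothetical and are not taken from the Forma codebase.

```python
# Memory figures quoted in the description, in GB of HBM.
# All names below are illustrative assumptions, not Forma's actual code.
WEIGHTS_70B_AWQ_GB = 53   # Llama 3.1 70B AWQ-INT4
WEIGHTS_8B_GB = 15        # Llama 3.1 8B Instruct
KV_CACHE_GB = 21          # approximate, as stated in the description


def peak_usage_gb() -> int:
    """Sum of both models' footprints plus the KV cache."""
    return WEIGHTS_70B_AWQ_GB + WEIGHTS_8B_GB + KV_CACHE_GB


def headroom_gb(capacity_gb: int) -> int:
    """Spare memory on a GPU; a negative value means the stack does not fit."""
    return capacity_gb - peak_usage_gb()


if __name__ == "__main__":
    for name, capacity in (("MI300X", 192), ("H100", 80)):
        print(f"{name}: peak {peak_usage_gb()} GB, headroom {headroom_gb(capacity)} GB")
```

With these inputs the peak is 89GB, which leaves 103GB free on a 192GB MI300X (the "~100GB" quoted above) and overshoots an 80GB H100 by 9GB.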

10 May 2026