Pathology Report Translator

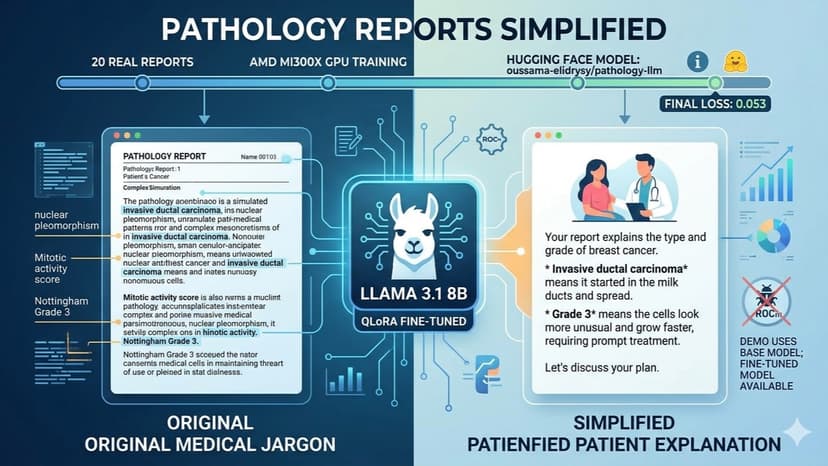

Medical jargon in pathology reports often confuses patients, causing anxiety and poor understanding. This project fine-tunes Meta’s Llama 3.1 8B Instruct model to translate such reports into simple, empathetic language suitable for patients and families. Using QLoRA (quantized low-rank adaptation), I trained the model on 20 real medical notes paired with human-written simplifications. Training ran on an AMD MI300X GPU via the AMD Developer Cloud and reached a final training loss of 0.053, which indicates the model fit the training examples closely.

The fine-tuned LoRA adapter is publicly available on Hugging Face as oussama-elidrysy/pathology-llm. It can be loaded on top of the base Llama model for inference on CPU or any compatible GPU. Because of a ROCm kernel bug that affects inference in this GPU environment, the live demo currently uses the base Llama model instead of the fine-tuned one. The adapter itself trained to completion, as the training metrics show, and can be used outside that environment. The repository includes all training scripts, the dataset, and instructions.

This tool can help doctors quickly generate patient-friendly explanations, improving communication and reducing patient anxiety. Future work includes expanding the dataset and deploying a stable web interface.
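Loading the adapter on top of the base model, as described above, might look roughly like the sketch below. It assumes the `transformers`, `peft`, and `torch` packages are installed and that you have access to the gated base checkpoint. The base model ID `meta-llama/Llama-3.1-8B-Instruct`, the system prompt, and the helper names (`build_messages`, `load_model`, `simplify`) are illustrative assumptions, not taken from the project repository.

```python
# Minimal sketch: load the published LoRA adapter on top of the base Llama model.
# Heavy libraries are imported lazily so the prompt helper works without them.

ADAPTER_ID = "oussama-elidrysy/pathology-llm"   # adapter repo named in the description
BASE_ID = "meta-llama/Llama-3.1-8B-Instruct"    # ASSUMPTION: base checkpoint ID on Hugging Face

# ASSUMPTION: hypothetical system prompt; the prompt used in training may differ.
SYSTEM_PROMPT = (
    "Rewrite the following pathology report in simple, empathetic language "
    "for a patient with no medical background."
)


def build_messages(report: str) -> list:
    """Wrap a raw report in the chat format expected by instruct models."""
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": report.strip()},
    ]


def load_model(device_map="auto"):
    """Load the base model, then attach the LoRA adapter weights."""
    import torch
    from peft import PeftModel
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(BASE_ID)
    base = AutoModelForCausalLM.from_pretrained(
        BASE_ID, torch_dtype=torch.bfloat16, device_map=device_map
    )
    model = PeftModel.from_pretrained(base, ADAPTER_ID)
    model.eval()
    return tokenizer, model


def simplify(report: str, tokenizer, model, max_new_tokens: int = 256) -> str:
    """Generate a patient-friendly rewrite of one report (greedy decoding)."""
    import torch

    device = next(model.parameters()).device
    inputs = tokenizer.apply_chat_template(
        build_messages(report),
        add_generation_prompt=True,
        return_tensors="pt",
        return_dict=True,
    ).to(device)
    with torch.no_grad():
        output = model.generate(**inputs, max_new_tokens=max_new_tokens, do_sample=False)
    # Decode only the newly generated tokens, not the prompt.
    prompt_len = inputs["input_ids"].shape[-1]
    return tokenizer.decode(output[0, prompt_len:], skip_special_tokens=True)


if __name__ == "__main__":
    tok, mdl = load_model()
    print(simplify("Invasive ductal carcinoma, grade 2, margins negative.", tok, mdl))
```

Passing `device_map="cpu"` to `load_model` should keep everything on the CPU, at the cost of slow generation for an 8B model. The sample report in the `__main__` block is a made-up one-liner for illustration, not an item from the training set.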

10 May 2026