🤓 Latest Submissions

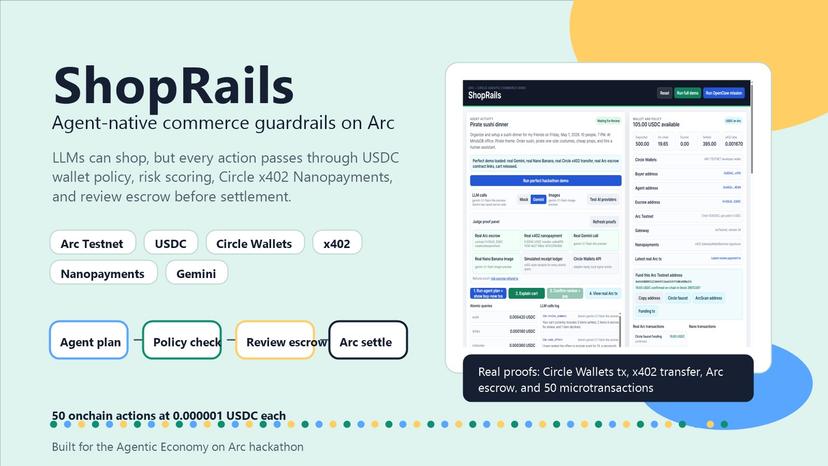

ShopRails

ShopRails is an agent-native commerce rail built for the Agentic Economy on Arc hackathon. It lets an LLM shop across merchant surfaces while buyer policy, risk scoring, Circle Wallets, x402 nanopayments, and Arc USDC escrow decide whether each item is bought now, held for review, or declined. The demo starts with a 500 USDC buyer wallet and a shopping request for a pirate sushi dinner. OpenClaw decomposes the request into atomic catalog queries, including a real Circle x402 payment for premium catalog data. Low-risk items settle immediately on Arc, higher-risk or human-service items move into a payable escrow contract, and blacklisted sellers or brands are declined before any signing path. The judge proof panel separates live proof from simulated rows: Gemini calls, generated product images, a real x402 transfer, ArcScan escrow transactions, Circle Wallets setup, and cached proof files so repeated demos do not accidentally duplicate spend. The core point is economic: agents need per-action prices, policy control, and verifiable settlement instead of unsafe card autofill or subscriptions.
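The buy-now, escrow, or decline routing described above can be sketched in a few lines of Python. This is a minimal illustration, not ShopRails' actual code: the `Item` and `Policy` types, the 0.0 to 1.0 risk scale, the 0.5 threshold, and the balance check are all assumptions introduced here.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    BUY_NOW = "buy_now"   # settle immediately
    ESCROW = "escrow"     # hold funds for review
    DECLINE = "decline"   # never reaches a signing path


@dataclass(frozen=True)
class Item:
    name: str
    seller: str
    price_usdc: float
    risk_score: float            # hypothetical scale: 0.0 (safe) to 1.0 (risky)
    human_service: bool = False


@dataclass(frozen=True)
class Policy:
    blacklist: frozenset         # seller or brand names the buyer refuses
    risk_threshold: float = 0.5  # assumed cutoff, not taken from the project


def route(item: Item, policy: Policy, balance_usdc: float) -> Decision:
    """Decide how a single item is handled before any payment is signed."""
    # Blacklist check comes first so a blocked seller is never paid.
    if item.seller in policy.blacklist:
        return Decision.DECLINE
    # Assumed extra guard: do not spend more than the wallet holds.
    if item.price_usdc > balance_usdc:
        return Decision.DECLINE
    # Higher-risk or human-service items go to escrow.
    if item.human_service or item.risk_score >= policy.risk_threshold:
        return Decision.ESCROW
    return Decision.BUY_NOW
```

Putting the blacklist check first mirrors the description's point that declined sellers are rejected before any signing path is reached.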

26 Apr 2026

NewsFacts

## Motivation / Problem

- Firsthand news facts are hard to monetize directly and quickly.
- AI agents need verifiable sources with clean payment trails.

## Impact

- Turns micro-facts into paid, citable sources.
- Demonstrates agentic commerce with USDC micropayments on Arc.

## Solution

- UI-first NewsFacts Studio for journalists to submit short facts.
- Free search + paid fact detail using x402 via Circle Gateway.
- MCP server for Gemini CLI to search and pay for facts with citations.

## Tech Stack

- Node.js 20, TypeScript + tsx
- Express 5
- Circle x402 batching SDK (@circlefin/x402-batching)
- Local embeddings with @xenova/transformers (used when LLM phase is enabled)
- MCP SDK for Gemini CLI integration
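The split between free search and paid fact detail can be illustrated with a small framework-free sketch. It is written in Python for brevity, although the project itself lists a Node.js stack, and it does not use the Circle SDK. The `FACTS` store, the price, and `payment_is_valid` are hypothetical placeholders; a real service would verify the x402 payment through its payment provider rather than compare strings.

```python
from http import HTTPStatus
from typing import Optional

# Hypothetical in-memory store; a real service would use a database.
FACTS = {
    "f1": {
        "headline": "Bridge reopened downtown",
        "detail": "The bridge reopened at 06:00 after repairs.",
        "price_usdc": "0.01",
    },
}


def search(query: str) -> list:
    """Free endpoint: expose only ids and headlines, never the paid detail."""
    q = query.lower()
    return [
        {"id": fact_id, "headline": fact["headline"]}
        for fact_id, fact in FACTS.items()
        if q in fact["headline"].lower()
    ]


def payment_is_valid(proof: str, price_usdc: str) -> bool:
    """Placeholder check (assumption): stands in for real x402 verification."""
    return proof == f"paid:{price_usdc}"


def fact_detail(fact_id: str, payment_proof: Optional[str] = None):
    """Paid endpoint: answer 402 with the price until a valid payment arrives."""
    fact = FACTS.get(fact_id)
    if fact is None:
        return HTTPStatus.NOT_FOUND, {"error": "unknown fact"}
    if payment_proof is None or not payment_is_valid(payment_proof, fact["price_usdc"]):
        # HTTP 402 Payment Required tells the agent what it must pay and retry.
        return HTTPStatus.PAYMENT_REQUIRED, {"price_usdc": fact["price_usdc"]}
    return HTTPStatus.OK, {"id": fact_id, "detail": fact["detail"]}
```

An agent would call `search` freely, receive a 402 with the price on its first `fact_detail` request, then retry with proof of payment to get the citable detail.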

24 Jan 2026


Synth Dev

## Problem

1. AI coding assistants (Copilot, Cursor, Aider.chat) accelerate software development.
2. People typically code not by reading documentation but by asking Llama, ChatGPT, Claude, or other LLMs.
3. LLMs struggle to understand documentation as it requires reasoning.
4. New projects or updated documentation often get overshadowed by legacy code.

## Solution

- To help LLMs comprehend new documentation, we need to generate a large number of usage examples.

## How we do it

1. Download the documentation from the URL and clean it by removing menus, headers, footers, tables of contents, and other boilerplate.
2. Analyze the documentation to extract main ideas, tools, use cases, and target audiences.
3. Brainstorm relevant use cases.
4. Refine each use case.
5. Conduct a human review of the code.
6. Organize the validated use cases into a dataset or RAG system.

## Tools we used

https://github.com/kirilligum/synth-dev

- **Restack**: To run, debug, log, and restart all steps of the pipeline.
- **TogetherAI**: For LLM API and example usage. See: https://github.com/kirilligum/synth-dev/blob/main/streamlit_fastapi_togetherai_llama/src/functions/function.py
- **Llama**: We used Llama 3.2 3b, breaking the pipeline into smaller steps to leverage a more cost-effective model. Scientific research shows that creating more data with smaller models is more efficient than using larger models. See: https://github.com/kirilligum/synth-dev/blob/main/streamlit_fastapi_togetherai_llama/src/functions/function.py
- **LlamaIndex**: For LLM calls, prototyping, initial web crawling, and RAG. See: https://github.com/kirilligum/synth-dev/blob/main/streamlit_fastapi_togetherai_llama/src/functions/function.py
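The cleaning part of step 1 (removing menus, headers, footers, and other boilerplate) could look roughly like the sketch below. It uses only Python's standard `html.parser` and is not the project's implementation, which relies on LlamaIndex for crawling. The set of tags to skip and the names `DocTextExtractor` and `clean_html` are assumptions made for this example.

```python
from html.parser import HTMLParser

# Assumed boilerplate containers; real documentation sites may need more rules.
SKIP_TAGS = {"nav", "header", "footer", "aside", "script", "style"}


class DocTextExtractor(HTMLParser):
    """Collects visible text while ignoring everything inside SKIP_TAGS."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0   # how many skipped containers we are nested in
        self._chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in SKIP_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in SKIP_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if self._skip_depth == 0 and text:
            self._chunks.append(text)

    def text(self):
        return "\n".join(self._chunks)


def clean_html(html: str) -> str:
    """Return the page text with navigation, header, and footer content dropped."""
    parser = DocTextExtractor()
    parser.feed(html)
    parser.close()
    return parser.text()
```

One limitation of this tag-based approach is that a `<header>` element nested inside the main article is dropped as well, so a real pipeline would likely need site-specific rules.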

11 Nov 2024