🤓 Latest Submissions

Vortex Compressor Float32 Compression

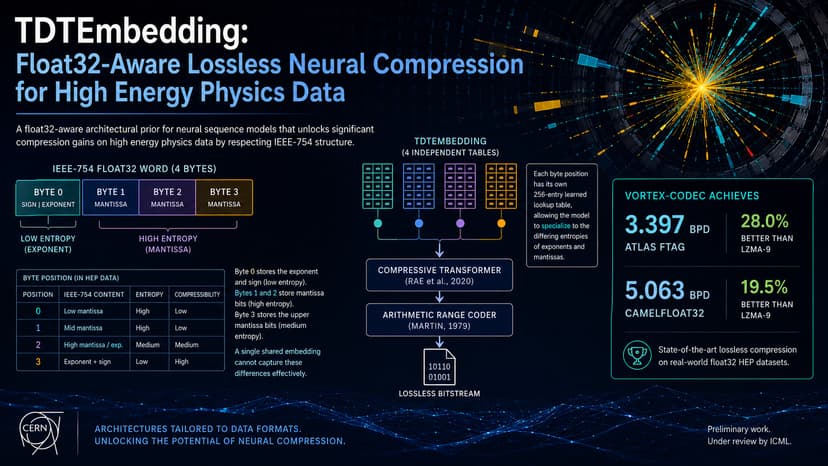

High Energy Physics (HEP) experiments generate around 15 PB of detector data per year, and storage capacity already constrains what can be retained long-term. Current pipelines rely on standard lossless compressors such as LZMA, which treat IEEE-754 float32 byte streams as unstructured data and ignore the structural heterogeneity of the floating-point format. To address this, we introduce Vortex Codec, featuring a novel architectural prior called TDTEmbedding (Type-Decomposed Token Embedding). Instead of a single shared lookup table, TDTEmbedding maintains four independent tables indexed by byte position modulo 4. This allows the neural sequence model to learn specialized representations for low-entropy exponent bytes and high-entropy mantissa bytes from scratch. We integrated this with a Compressive Transformer using Learnable Token Eviction (LTE) and an arithmetic range coder. Our 87M-parameter model was trained and profiled on an MI300X GPU on the AMD Developer Cloud. The results establish state-of-the-art archival efficiency: Vortex Codec achieves 3.397 and 5.063 bits per byte (BPB) on the ATLAS FTAG and CamelFloat32 benchmarks, respectively, a 28.0% and 19.5% improvement over LZMA-9.
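To make the "four tables indexed by byte position modulo 4" idea concrete, here is a minimal, untrained sketch in plain Python. It is not the Vortex Codec implementation: the names `make_tables`, `tdt_embed`, and `lane_entropy`, the embedding width `DIM`, the random initialisation, and the synthetic Gaussian data are all hypothetical, and big-endian byte order is assumed so that position 0 holds the sign and high exponent bits. The per-position entropy estimate illustrates why separate tables may help.

```python
import math
import random
import struct
from collections import Counter

VOCAB = 256  # one token per possible byte value
LANES = 4    # a float32 occupies 4 bytes, so positions repeat modulo 4
DIM = 8      # hypothetical embedding width, chosen only for illustration


def make_tables(seed=0):
    """Create LANES independent VOCAB x DIM tables (random stand-ins for learned weights)."""
    rng = random.Random(seed)
    return [
        [[rng.gauss(0.0, 0.02) for _ in range(DIM)] for _ in range(VOCAB)]
        for _ in range(LANES)
    ]


def tdt_embed(tables, data):
    """Look up each byte in the table selected by its position modulo LANES."""
    return [tables[i % LANES][b] for i, b in enumerate(data)]


def lane_entropy(data):
    """Empirical Shannon entropy (bits per byte) of each byte position."""
    result = []
    for lane in range(LANES):
        counts = Counter(data[lane::LANES])
        n = sum(counts.values())
        result.append(-sum(c / n * math.log2(c / n) for c in counts.values()))
    return result


# Synthetic stand-in for detector values (an assumption, not real HEP data).
rng = random.Random(1)
values = [rng.gauss(50.0, 5.0) for _ in range(4096)]

# Big-endian packing: byte 0 = sign + high exponent bits, byte 3 = low mantissa bits.
raw = struct.pack(f">{len(values)}f", *values)

tables = make_tables()
emb = tdt_embed(tables, raw)
ent = lane_entropy(raw)

print("embedded tokens:", len(emb))
print("entropy per byte position:", [round(e, 3) for e in ent])
```

On this synthetic data the leading byte position is nearly constant while the trailing mantissa byte is close to uniform, which is the heterogeneity a single shared lookup table would have to absorb. The same byte value maps to a different vector in each position's table.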

10 May 2026
