
Fuyuto MIyake @fuyutothunder

🤓 Latest Submissions

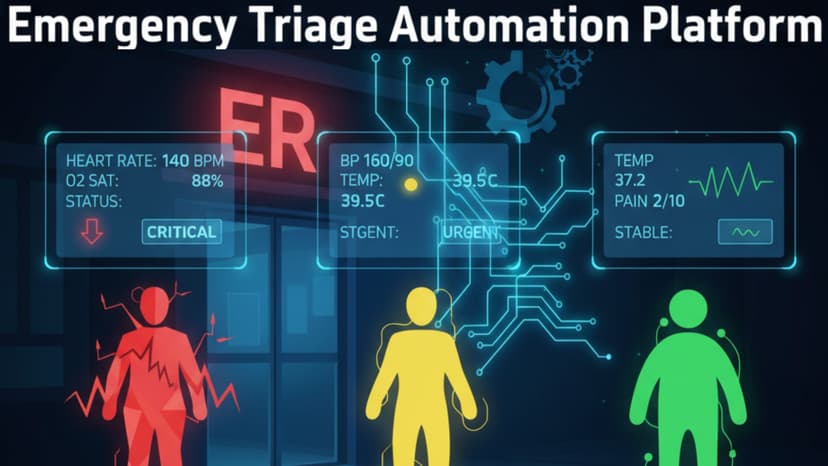

Emergency Triage Automation - Prioritizing Care

This project turns a smartphone into a lightweight multimodal triage sensor and uses OPUS as the central decision engine for safe, auditable emergency triage. The PWA runs a 10-second on-device video analysis using MediaPipe Tasks and a lightweight rPPG pipeline built with WebAssembly/FFT to extract respiratory rate, heart rate, posture, respiratory effort, pallor, sweat, eye openness, facial asymmetry, and pain indicators. These structured features are assembled into a unified TriageRequestPayload and sent to an OPUS workflow built around the full Intake → Understand → Decide → Review → Deliver lifecycle.

In Intake, OPUS validates the schema and receives vitals, posture, face features, and the chief complaint. In Understand, Agent nodes detect vital-sign red flags, extract complaint keywords, and classify high-risk patterns such as severe respiratory effort or multiple unstable findings. Each Agent outputs structured fields with concise reasoning.

In Decide, a Decision node aggregates all extracted information and assigns a triage level (red/yellow/green) using explicit rules, while an Agentic Review node checks guideline consistency and highlights mismatches. In Review, OPUS routes only RED + high-risk cases to a Human Review node, creating a human-in-the-loop safety gate aligned with real emergency workflows. Reviewers receive the extracted fields, triggered rules, and reasoning, and may confirm or modify the triage level with a justification.

In Deliver, OPUS generates a structured TriageResponsePayload containing the final triage level, a clinical summary, recommended next actions, and key debug fields. Each Job automatically produces an audit artifact that captures inputs, intermediate outputs, rule activations, timestamps, reviewer decisions, and model versions, so every decision can be traced for provenance and compliance review. The system shows how OPUS can orchestrate a high-risk, high-volume medical workflow with transparency, safety, and practical deployability.
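The Decide and Review steps described above can be sketched as a small rule table plus a routing flag. This is a minimal illustration, not the project's actual OPUS workflow. The field names, rule identifiers, and numeric thresholds below are all hypothetical placeholders; the source names TriageRequestPayload but does not publish its schema or its rules. The sketch also assumes that "RED + high-risk" means a case must be both red and high-risk before it reaches a human reviewer.

```typescript
type TriageLevel = "red" | "yellow" | "green";
type Effort = "none" | "mild" | "severe";

// Hypothetical subset of the payload; the real schema is not given in the source.
interface TriageRequestPayload {
  heartRateBpm: number;
  respiratoryRatePerMin: number;
  respiratoryEffort: Effort;
  chiefComplaint: string; // keyword extraction is omitted from this sketch
}

interface Rule {
  id: string;
  level: "red" | "yellow";
  matches: (p: TriageRequestPayload) => boolean;
}

// Placeholder thresholds for illustration only; not taken from the project
// or from any clinical guideline.
const RULES: Rule[] = [
  { id: "HR_CRITICAL", level: "red", matches: (p) => p.heartRateBpm < 40 || p.heartRateBpm > 130 },
  { id: "RR_CRITICAL", level: "red", matches: (p) => p.respiratoryRatePerMin < 8 || p.respiratoryRatePerMin > 30 },
  { id: "SEVERE_RESP_EFFORT", level: "red", matches: (p) => p.respiratoryEffort === "severe" },
  { id: "MILD_RESP_EFFORT", level: "yellow", matches: (p) => p.respiratoryEffort === "mild" },
];

interface TriageDecision {
  level: TriageLevel;
  triggeredRules: string[]; // kept so a reviewer or audit log can see why
  highRisk: boolean;
  needsHumanReview: boolean;
}

function decideTriage(p: TriageRequestPayload): TriageDecision {
  const hits = RULES.filter((r) => r.matches(p));
  const redHits = hits.filter((r) => r.level === "red");

  // The most severe triggered rule determines the level.
  const level: TriageLevel =
    redHits.length > 0 ? "red" : hits.length > 0 ? "yellow" : "green";

  // Assumed reading of "high-risk pattern": severe respiratory effort,
  // or two or more red findings at once.
  const highRisk =
    redHits.length >= 2 || hits.some((r) => r.id === "SEVERE_RESP_EFFORT");

  return {
    level,
    triggeredRules: hits.map((r) => r.id),
    highRisk,
    needsHumanReview: level === "red" && highRisk, // assumed AND semantics
  };
}

// Example: fast pulse, fast breathing, and severe effort all trigger,
// so the case is red, high-risk, and routed to human review.
const exampleDecision = decideTriage({
  heartRateBpm: 140,
  respiratoryRatePerMin: 34,
  respiratoryEffort: "severe",
  chiefComplaint: "shortness of breath",
});
```

Keeping the rules as data rather than nested conditionals means the list of triggered rule IDs comes for free, which is the kind of information the description says is shown to reviewers and recorded in the audit artifact.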

19 Nov 2025