🤓 Latest Submissions

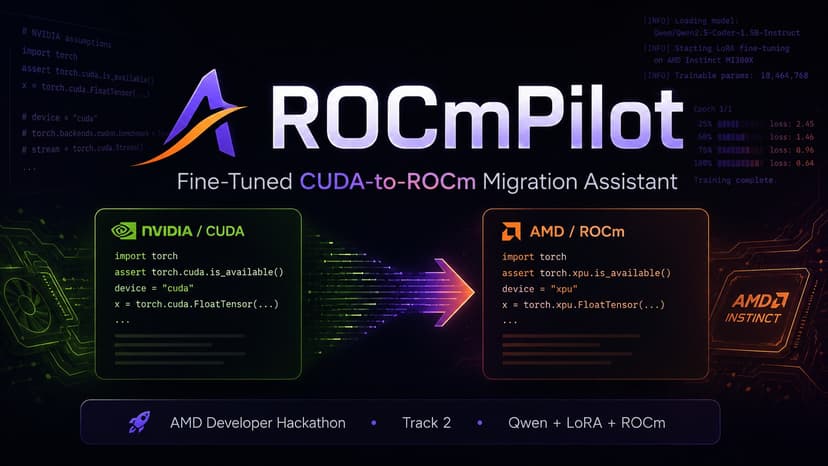

ROCmPilot

ROCmPilot is a fine-tuned AI code migration assistant for developers moving CUDA-first machine learning workloads to AMD ROCm. The project targets a real adoption barrier for AMD GPUs: many Python scripts, Dockerfiles, and error logs still assume NVIDIA/CUDA tooling, even when the workload itself could run on AMD Instinct accelerators.

ROCmPilot is built on Qwen/Qwen2.5-Coder-1.5B-Instruct and fine-tuned with PEFT LoRA on an AMD Instinct MI300X instance using ROCm and PyTorch. I created a synthetic instruction dataset with 240+ CUDA-to-ROCm migration examples covering hardcoded CUDA devices, NVIDIA Docker base images, nvidia-smi vs rocm-smi checks, PyTorch ROCm verification, quantization dependency issues, and common migration errors.

The final system is deployed as a Hugging Face Space. For reliability on free CPU hosting, the public demo uses a deterministic migration engine by default, while the actual fine-tuned LoRA adapter is published separately and can be enabled through live model mode. The goal is to make AMD ROCm migration faster, clearer, and less intimidating for ML engineers, DevOps teams, and developers adopting AMD GPUs.
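To make the idea of a deterministic migration engine more concrete, here is a minimal sketch of how a rule-based scanner for CUDA-specific patterns might look. It is not ROCmPilot's actual implementation: the `Rule`, `Finding`, and `scan` names, the three rules, and the advice strings are all hypothetical and chosen only to mirror a few of the dataset categories listed above (NVIDIA Docker base images, nvidia-smi checks, hardcoded CUDA devices).

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    name: str
    pattern: re.Pattern
    advice: str


@dataclass(frozen=True)
class Finding:
    line_no: int
    rule: str
    advice: str


# Hypothetical rules for illustration only; not ROCmPilot's real rule set.
RULES = [
    Rule(
        "nvidia-base-image",
        re.compile(r"^\s*FROM\s+nvidia/cuda\b", re.IGNORECASE),
        "Switch to a ROCm base image such as rocm/pytorch.",
    ),
    Rule(
        "nvidia-smi",
        re.compile(r"\bnvidia-smi\b"),
        "Use rocm-smi to query AMD GPUs.",
    ),
    Rule(
        "hardcoded-device",
        re.compile(r"""torch\.device\(\s*["']cuda:\d+["']\s*\)"""),
        "Avoid a fixed device index; PyTorch ROCm builds still expose "
        "AMD GPUs through the torch.cuda API.",
    ),
]


def scan(text: str) -> list[Finding]:
    """Return one finding per (line, rule) match, in line order."""
    findings = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for rule in RULES:
            if rule.pattern.search(line):
                findings.append(Finding(line_no, rule.name, rule.advice))
    return findings


if __name__ == "__main__":
    sample = (
        "FROM nvidia/cuda:12.1.0-runtime-ubuntu22.04\n"
        "RUN nvidia-smi\n"
        'device = torch.device("cuda:0")\n'
    )
    for finding in scan(sample):
        print(f"line {finding.line_no}: [{finding.rule}] {finding.advice}")
```

Because the same input always produces the same findings and no model weights are needed, a scanner of this kind would suit CPU-only hosting, with the fine-tuned adapter reserved for cases the fixed rules do not cover.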

10 May 2026