🤓 Latest Submissions

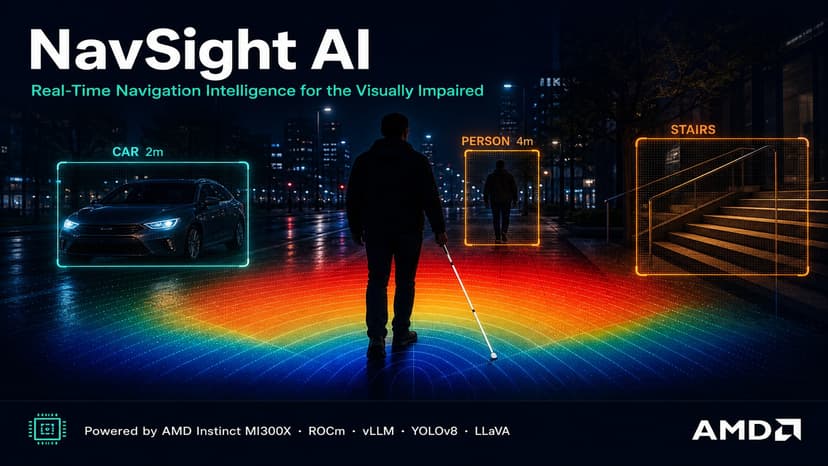

NavSight AI: Navigation for the Visually Impaired

2.2 billion people worldwide live with vision impairment, and 43 million are completely blind. Guide dogs cost $25,000–$50,000 and take months to train. White canes detect obstacles only within about 1 meter. Existing apps can label objects, but they cannot understand complex scenes, judge distances, or warn you about a car turning toward you.

NavSight AI is a real-time navigation assistant built on AMD Instinct MI300X with ROCm. Four specialized agents work in concert: a Vision Agent (YOLOv8 + Depth Anything V2, ~25ms/frame); a Scene Agent (LLaVA-NeXT 7B via vLLM, ~290ms for contextual understanding); a Navigation Agent (a priority matrix that ranks hazards from CRITICAL to LOW); and a Communication Agent (spoken guidance, with CRITICAL alerts interrupting mid-sentence).

Core innovation: a dual inference path, with a fast path on every frame (~25ms) and a smart path on every 3rd frame (~290ms), both running on one MI300X GPU and using only ~15 GB of its 192 GB of HBM3. We also solved a real ROCm gap: torchvision::nms is absent in ROCm builds, so we wrote a fully GPU-native NMS in pure PyTorch with zero CPU round-trips. NavSight tracks object motion from frame to frame (approaching, receding, or crossing) and maintains surface state across frames, so stair warnings persist throughout a descent.

Validation: 4 of 4 hazards detected, with 0 critical false negatives, across 4 real-world scenarios.

NavSight targets a $7.6B assistive tech market growing at 14% annually. Unlike Glidance ($999 hardware) or Be My Eyes (human volunteers), NavSight needs only a camera, which makes it scalable at $5–10/month. Roadmap: a mobile app on AMD Ryzen AI, GPS turn-by-turn navigation, multi-language TTS, and community hazard mapping. Every person deserves to walk safely, and NavSight AI and AMD MI300X make that possible.
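The dual inference path and the CRITICAL-to-LOW ranking described above could be sketched roughly as follows. This is a minimal illustration, not NavSight's actual code: `run_pipeline`, `Hazard`, `fast_path`, and `smart_path` are hypothetical names, the `MEDIUM` and `HIGH` levels are assumed (the description names only CRITICAL and LOW), and the smart path runs synchronously here, whereas a real system would likely run it off the per-frame loop.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Any, Callable, Iterable, List, Optional, Tuple


class Priority(IntEnum):
    """Hazard levels. Only CRITICAL and LOW appear in the description;
    MEDIUM and HIGH are assumed intermediate levels for this sketch."""
    LOW = 0
    MEDIUM = 1
    HIGH = 2
    CRITICAL = 3


@dataclass
class Hazard:
    label: str
    priority: Priority


def run_pipeline(
    frames: Iterable[Any],
    fast_path: Callable[[Any], List[Hazard]],
    smart_path: Callable[[Any], str],
    smart_every: int = 3,
) -> List[Tuple[List[Hazard], Optional[str]]]:
    """Run the fast path on every frame and the smart path on every
    `smart_every`-th frame (frames 0, 3, 6, ... by default).

    Returns, per frame, the hazards sorted most urgent first and the most
    recent scene context (reused between smart-path runs).
    """
    results = []
    context: Optional[str] = None
    for index, frame in enumerate(frames):
        # Fast path: cheap detection on every frame, ranked by urgency.
        hazards = sorted(fast_path(frame), key=lambda h: h.priority, reverse=True)
        # Smart path: slower scene understanding, refreshed periodically.
        if index % smart_every == 0:
            context = smart_path(frame)
        results.append((hazards, context))
    return results
```

With stub functions standing in for the two models, each frame yields its hazards in urgency order plus the latest scene description, so a CRITICAL hazard found by the fast path is available without waiting for the next smart-path result.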

10 May 2026
