
🤓 Latest Submissions

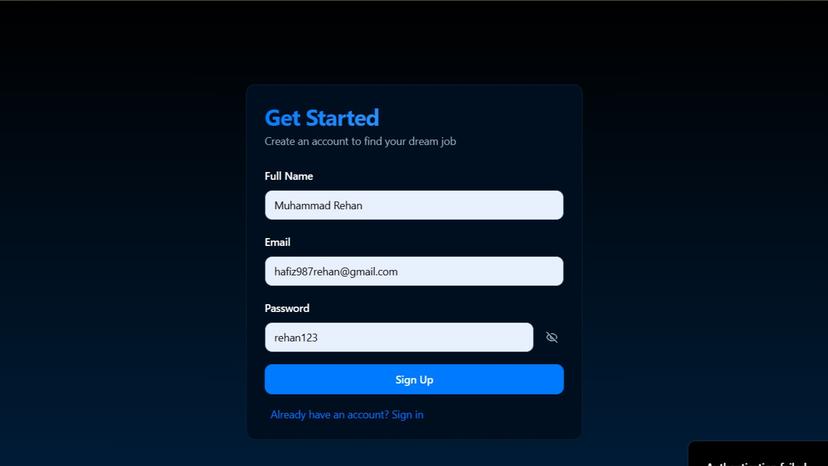

Job Finder

Job Finder System is a smart, automated solution that streamlines the job search by gathering job listings from various online platforms and presenting them in a clean, structured format. The system scans selected websites, extracts job information (such as title, salary, description, and company name), and filters the results against predefined criteria such as skills, category, salary range, or location. The platform includes:

- Automated Web Scraping: collects job postings from employment portals and company sites.
- Smart Filtering & Categorization: sorts jobs by relevance, skills, salary, or industry.
- Real-Time Updates: frequently checks for new job opportunities and adds them to the system.
- User-Friendly Interface: lets users easily search, browse, or save job listings.
- Data Storage & Management: stores all job listings in a database for fast access and tracking.

This system reduces manual job searching and gives users accurate, up-to-date career opportunities, making the job hunt faster and more efficient.

7 Dec 2025
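The filtering and sorting that Job Finder describes (skills, category, salary range, location) could look roughly like the minimal Python sketch below. It assumes the scraper has already produced job records; the `Job` and `Criteria` classes, their field names, and the ranking rule (most matching skills first, then higher salary) are illustrative assumptions, not the project's actual code.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Job:
    # Hypothetical record: title, company, salary, and description mirror the
    # extracted fields; category, location, and skills are assumed filter fields.
    title: str
    company: str
    salary: int  # assumed: annual salary as a single number
    description: str
    category: str
    location: str
    skills: set[str] = field(default_factory=set)


@dataclass
class Criteria:
    # Every criterion is optional; None means "do not filter on this".
    skills: Optional[set[str]] = None
    category: Optional[str] = None
    min_salary: Optional[int] = None
    max_salary: Optional[int] = None
    location: Optional[str] = None


def normalize(words: set[str]) -> set[str]:
    """Lower-case and trim words so matching is case-insensitive."""
    return {w.strip().lower() for w in words}


def matches(job: Job, c: Criteria) -> bool:
    """Return True if the job satisfies every criterion that is set."""
    if c.category is not None and job.category.lower() != c.category.lower():
        return False
    if c.location is not None and job.location.lower() != c.location.lower():
        return False
    if c.min_salary is not None and job.salary < c.min_salary:
        return False
    if c.max_salary is not None and job.salary > c.max_salary:
        return False
    # Require at least one wanted skill when skills are specified.
    if c.skills and not normalize(job.skills) & normalize(c.skills):
        return False
    return True


def skill_overlap(job: Job, c: Criteria) -> int:
    """Count how many of the wanted skills the job lists."""
    if not c.skills:
        return 0
    return len(normalize(job.skills) & normalize(c.skills))


def filter_and_rank(jobs: list[Job], c: Criteria) -> list[Job]:
    """Keep matching jobs; most matching skills first, ties by higher salary."""
    selected = [j for j in jobs if matches(j, c)]
    return sorted(selected, key=lambda j: (-skill_overlap(j, c), -j.salary))


if __name__ == "__main__":
    jobs = [
        Job("Backend Engineer", "Acme", 90000, "...", "Engineering", "Remote", {"Python", "SQL"}),
        Job("Data Analyst", "Globex", 70000, "...", "Data", "Berlin", {"SQL", "Excel"}),
        Job("ML Engineer", "Initech", 120000, "...", "Engineering", "Remote", {"Python", "PyTorch", "SQL"}),
    ]
    wanted = Criteria(skills={"python", "sql"}, category="engineering", min_salary=80000)
    for job in filter_and_rank(jobs, wanted):
        print(job.title, job.company, job.salary)
```

Keeping every criterion optional lets the same function serve both a broad browse (no criteria, everything sorted by salary) and a narrow search.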

Medical AI Assistant

The lablab_project is an experimental full-stack application that combines a TypeScript frontend with a Python backend. It demonstrates how a client-side interface can interact with server-side logic to deliver a complete web application. The frontend, written in TypeScript and styled with CSS, handles user interaction and rendering, while the Python backend handles the APIs, data processing, and integrations. This separation of concerns keeps the project modular, scalable, and easy to extend. The system can serve as a learning tool for students and developers exploring full-stack development, or as a prototype for a production-ready solution. By combining UI design, backend logic, and cross-platform integration, it shows how modern web technologies fit together in a single working application.

21 Sep 2025

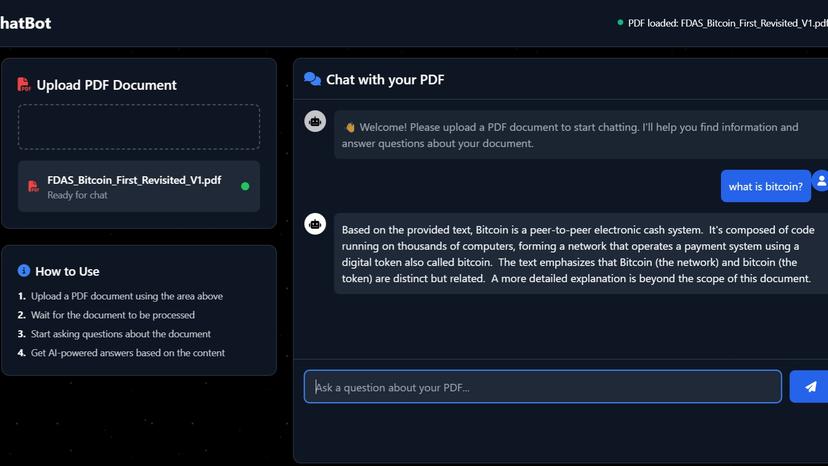

PDF ChatBot RAG

This application implements an interactive PDF chatbot using a Retrieval-Augmented Generation (RAG) pipeline built with LangChain and OpenAI and deployed on Vercel. Here's how it works, step by step:

1. Upload and Process
   - The interface invites users to upload a PDF, either by drag and drop or by browsing.
   - On upload, the system processes the PDF: it extracts the content, potentially splitting it into smaller, manageable text chunks (a common RAG strategy).
2. The tool uses LangChain, a toolkit for orchestrating language model workflows, to:
   - load the document content;
   - break it into chunks.
3. Deployment & Architecture
   - The app is hosted on Vercel, which offers edge deployment and serverless execution.
   - LangChain orchestrates the logic, from ingestion to retrieval to generation.

24 Aug 2025
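The ingestion and retrieval steps described for PDF ChatBot RAG can be sketched without any framework, as in the minimal Python example below. It is an assumption-laden stand-in, not the project's LangChain code: it splits extracted text into overlapping character chunks, scores chunks against the question with a bag-of-words cosine similarity in place of OpenAI embeddings and a vector store, and builds a prompt from the best matches. `split_into_chunks`, `retrieve`, `build_prompt`, and the chunk sizes are hypothetical names and values.

```python
import math
import re
from collections import Counter


def split_into_chunks(text: str, chunk_size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into fixed-size character windows that overlap.

    Overlap keeps text that straddles a boundary visible in both chunks.
    """
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(text), step):
        chunk = text[start:start + chunk_size]
        if chunk.strip():
            chunks.append(chunk)
        if start + chunk_size >= len(text):
            break  # this window already reached the end of the text
    return chunks


def embed(text: str) -> Counter:
    # Stand-in for an embedding model: a bag-of-words term-count vector.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(count * b[term] for term, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the question."""
    q = embed(question)
    return sorted(chunks, key=lambda ch: cosine(q, embed(ch)), reverse=True)[:k]


def build_prompt(question: str, context_chunks: list[str]) -> str:
    """Ground the model's answer in the retrieved chunks."""
    context = "\n---\n".join(context_chunks)
    return (
        "Answer the question using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


if __name__ == "__main__":
    # In the real app this text would come from the uploaded PDF.
    pdf_text = (
        "Refunds are processed within five business days. "
        "Invoices are due thirty days after issue. "
        "Support is available on weekdays."
    )
    chunks = split_into_chunks(pdf_text, chunk_size=60, overlap=15)
    question = "How long do refunds take?"
    # The generation step would send this prompt to the chat model.
    print(build_prompt(question, retrieve(question, chunks, k=1)))
```

In a LangChain version, the chunking would typically be handled by one of its text splitters and the scoring by an embedding model plus a vector store, with the final prompt going to the OpenAI model for generation.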

